Entropy


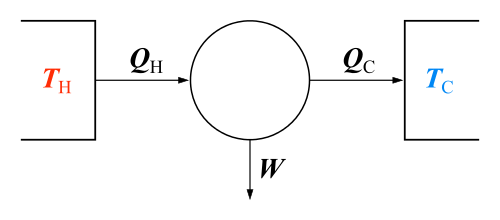

The classical Carnot heat engine


In statistical thermodynamics, entropy (usual symbol S) is a measure of the number of microscopic configurations Ω that correspond to a thermodynamic system in a state specified by certain macroscopic variables. Specifically, assuming that each of the microscopic configurations is equally probable, the entropy of the system is the natural logarithm of that number of configurations, multiplied by the Boltzmann constant kB (which provides consistency with the original thermodynamic concept of entropy discussed below, and gives entropy the dimension of energy divided by temperature). Formally,

$$S = k_\mathrm{B} \ln \Omega.$$

For example, gas in a container with known volume, pressure, and temperature could have an enormous number of possible configurations of the individual gas molecules, and which configuration the gas is actually in may be regarded as random. Hence, entropy can be understood as a measure of molecular disorder within a macroscopic system. The second law of thermodynamics states that an isolated system's entropy never decreases. Such systems spontaneously evolve towards thermodynamic equilibrium, the state with maximum entropy. Non-isolated systems may lose entropy, provided their environment's entropy increases by at least that decrement. Since entropy is a state function, the change in entropy of a system is determined by its initial and final states. This applies whether the process is reversible or irreversible. However, irreversible processes increase the combined entropy of the system and its environment.

The change in entropy (ΔS) of a system was originally defined for a thermodynamically reversible process as

$$\Delta S = \int \frac{\delta Q_\text{rev}}{T},$$

where T is the absolute temperature of the system and δQrev is an incremental reversible transfer of heat into that system. (If heat is transferred out, the sign is reversed, giving a decrease in the entropy of the system.) The above definition is sometimes called the macroscopic definition of entropy because it can be used without regard to any microscopic description of the contents of a system. The concept of entropy has been found to be generally useful and has several other formulations. Entropy was discovered when it was noticed to be a quantity that behaves as a function of state, as a consequence of the second law of thermodynamics.

Entropy is an extensive property. It has the dimension of energy divided by temperature, which has a unit of joules per kelvin (J⋅K⁻¹) in the International System of Units (or kg⋅m²⋅s⁻²⋅K⁻¹ in terms of base units). But the entropy of a pure substance is usually given as an intensive property, either entropy per unit mass (SI unit: J⋅K⁻¹⋅kg⁻¹) or entropy per unit amount of substance (SI unit: J⋅K⁻¹⋅mol⁻¹).

The absolute entropy (S rather than ΔS) was defined later, using either statistical mechanics or the third law of thermodynamics: an otherwise arbitrary additive constant is fixed such that the entropy at absolute zero is zero. In statistical mechanics this reflects that the ground state of a system is generally non-degenerate and only one microscopic configuration corresponds to it.

In the modern microscopic interpretation of entropy in statistical mechanics, entropy is the amount of additional information needed to specify the exact physical state of a system, given its thermodynamic specification. Understanding the role of thermodynamic entropy in various processes requires an understanding of how and why that information changes as the system evolves from its initial to its final condition. It is often said that entropy is an expression of the disorder, or randomness of a system, or of our lack of information about it. The second law is now often seen as an expression of the fundamental postulate of statistical mechanics through the modern definition of entropy.

History

The French mathematician Lazare Carnot proposed in his 1803 paper Fundamental Principles of Equilibrium and Movement that in any machine the accelerations and shocks of the moving parts represent losses of moment of activity. In other words, in any natural process there exists an inherent tendency towards the dissipation of useful energy. Building on this work, in 1824 Lazare's son Sadi Carnot published Reflections on the Motive Power of Fire, which posited that in all heat-engines, whenever "caloric" (what is now known as heat) falls through a temperature difference, work or motive power can be produced from the actions of its fall from a hot to cold body. He made the analogy with how water falls in a water wheel. This was an early insight into the second law of thermodynamics.[1] Carnot based his views of heat partially on the early 18th century "Newtonian hypothesis" that both heat and light were types of indestructible forms of matter, which are attracted and repelled by other matter, and partially on the contemporary views of Count Rumford who showed (1789) that heat could be created by friction as when cannon bores are machined.[2] Carnot reasoned that if the body of the working substance, such as a body of steam, is returned to its original state at the end of a complete engine cycle, then "no change occurs in the condition of the working body".

The first law of thermodynamics, deduced from the heat-friction experiments of James Joule in 1843, expresses the concept of energy, and its conservation in all processes; the first law, however, is unable to quantify the effects of friction and dissipation.

In the 1850s and 1860s, German physicist Rudolf Clausius objected to the supposition that no change occurs in the working body, and gave this "change" a mathematical interpretation by questioning the nature of the inherent loss of usable heat when work is done, e.g. heat produced by friction.[3] Clausius described entropy as the transformation-content, i.e. dissipative energy use, of a thermodynamic system or working body of chemical species during a change of state.[3] This was in contrast to earlier views, based on the theories of Isaac Newton, that heat was an indestructible particle that had mass.

Later, scientists such as Ludwig Boltzmann, Josiah Willard Gibbs, and James Clerk Maxwell gave entropy a statistical basis. In 1877 Boltzmann visualized a probabilistic way to measure the entropy of an ensemble of ideal gas particles, in which he defined entropy to be proportional to the logarithm of the number of microstates such a gas could occupy. Henceforth, the essential problem in statistical thermodynamics, according to Erwin Schrödinger, has been to determine the distribution of a given amount of energy E over N identical systems. Carathéodory linked entropy with a mathematical definition of irreversibility, in terms of trajectories and integrability.

Definitions and descriptions

Any method involving the notion of entropy, the very existence of which depends on the second law of thermodynamics, will doubtless seem to many far-fetched, and may repel beginners as obscure and difficult of comprehension.

Willard Gibbs, Graphical Methods in the Thermodynamics of Fluids[4]

There are two related definitions of entropy: the thermodynamic definition and the statistical mechanics definition. Historically, the classical thermodynamics definition developed first. In the classical thermodynamics viewpoint, the system is composed of very large numbers of constituents (atoms, molecules) and the state of the system is described by the average thermodynamic properties of those constituents; the details of the system's constituents are not directly considered, but their behavior is described by macroscopically averaged properties, e.g. temperature, pressure, entropy, heat capacity. The early classical definition of the properties of the system assumed equilibrium. The classical thermodynamic definition of entropy has more recently been extended into the area of non-equilibrium thermodynamics. Later, the thermodynamic properties, including entropy, were given an alternative definition in terms of the statistics of the motions of the microscopic constituents of a system, modeled at first classically, e.g. Newtonian particles constituting a gas, and later quantum-mechanically (photons, phonons, spins, etc.). The statistical mechanics description of the behavior of a system is necessary as the definition of the properties of a system using classical thermodynamics becomes an increasingly unreliable method of predicting the final state of a system that is subject to some process.

Function of state

There are many thermodynamic properties that are functions of state. This means that at a particular thermodynamic state (which should not be confused with the microscopic state of a system), these properties have a certain value. Often, if two properties of the system are determined, then the state is determined and the other properties' values can also be determined. For instance, a quantity of gas at a particular temperature and pressure has its state fixed by those values and thus has a specific volume that is determined by those values. As another instance, a system composed of a pure substance of a single phase at a particular uniform temperature and pressure is determined (and is thus a particular state) and is at not only a particular volume but also at a particular entropy.[5] The fact that entropy is a function of state is one reason it is useful. In the Carnot cycle, the working fluid returns to the same state it had at the start of the cycle, hence the line integral of any state function, such as entropy, over this reversible cycle is zero.

Reversible process

Entropy is defined for a reversible process and for a system that, at all times, can be treated as being at a uniform state and thus at a uniform temperature. Reversibility is an ideal that some real processes approximate and that is often presented in study exercises. For a reversible process, entropy behaves as a conserved quantity and no change occurs in total entropy. More specifically, total entropy is conserved in a reversible process and not conserved in an irreversible process.[6] One has to be careful about system boundaries. For example, in the Carnot cycle, while the heat flow from the hot reservoir to the cold reservoir represents an increase in entropy, the work output, if reversibly and perfectly stored in some energy storage mechanism, represents a decrease in entropy that could be used to operate the heat engine in reverse and return to the previous state, thus the total entropy change is still zero at all times if the entire process is reversible. Any process that does not meet the requirements of a reversible process must be treated as an irreversible process, which is usually a complex task. An irreversible process increases entropy.[7]

Heat transfer situations require two or more non-isolated systems in thermal contact. In irreversible heat transfer, heat energy is irreversibly transferred from the higher temperature system to the lower temperature system, and the combined entropy of the systems increases. Each system, by definition, must have its own absolute temperature applicable within all areas in each respective system in order to calculate the entropy transfer. Thus, when a system at higher temperature TH transfers heat dQ to a system of lower temperature TC, the former loses entropy dQ/TH and the latter gains entropy dQ/TC. Since TH > TC, it follows that dQ/TH < dQ/TC, whence there is a net gain in the combined entropy.
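The bookkeeping in the paragraph above can be sketched numerically. This is a minimal illustration, assuming the heat transferred is small enough that each system's temperature stays effectively constant; the function name and the sample values are hypothetical and not from the source.

```python
def entropy_change_heat_transfer(dq, t_hot, t_cold):
    """Return (dS_hot, dS_cold, dS_total) in J/K for heat dq (J)
    flowing from a system at t_hot (K) to a system at t_cold (K).

    Assumption: dq is small enough that both temperatures stay constant.
    """
    if t_hot <= 0 or t_cold <= 0:
        raise ValueError("absolute temperatures must be positive")
    ds_hot = -dq / t_hot    # the hotter system loses entropy dQ/TH
    ds_cold = dq / t_cold   # the colder system gains entropy dQ/TC
    return ds_hot, ds_cold, ds_hot + ds_cold


# Illustrative values only: 100 J flowing from 400 K to 300 K.
ds_hot, ds_cold, ds_total = entropy_change_heat_transfer(100.0, 400.0, 300.0)
print(f"hot: {ds_hot:.4f} J/K, cold: {ds_cold:.4f} J/K, net: {ds_total:.4f} J/K")
```

Because TH > TC, the gain of the colder system always outweighs the loss of the hotter one, so the net change is positive for any positive dq.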

Carnot cycle

The concept of entropy arose from Rudolf Clausius's study of the Carnot cycle.[8] In a Carnot cycle, heat QH is absorbed isothermally at temperature TH from a 'hot' reservoir and given up isothermally as heat QC to a 'cold' reservoir at TC. According to Carnot's principle, work can only be produced by the system when there is a temperature difference, and the work should be some function of the difference in temperature and the heat absorbed (QH). Carnot did not distinguish between QH and QC, since he was using the incorrect hypothesis that caloric theory was valid, and hence heat was conserved (the incorrect assumption that QH and QC were equal) when, in fact, QH is greater than QC.[9][10] Through the efforts of Clausius and Kelvin, it is now known that the maximum work that a system can produce is the product of the Carnot efficiency and the heat absorbed from the hot reservoir:

$$W = \left(1 - \frac{T_\text{C}}{T_\text{H}}\right) Q_\text{H} \qquad (1)$$

To derive the Carnot efficiency, which is 1 − TC/TH (a number less than one), Kelvin had to evaluate the ratio of the work output to the heat absorbed during the isothermal expansion with the help of the Carnot-Clapeyron equation, which contained an unknown function known as the Carnot function. The possibility that the Carnot function could be the temperature as measured from a zero temperature was suggested by Joule in a letter to Kelvin. This allowed Kelvin to establish his absolute temperature scale.[11] It is also known that the work produced by the system is the difference between the heat absorbed from the hot reservoir and the heat given up to the cold reservoir:

$$W = Q_\text{H} - Q_\text{C} \qquad (2)$$

Since the latter is valid over the entire cycle, this gave Clausius the hint that at each stage of the cycle, work and heat would not be equal, but rather their difference would be a state function that would vanish upon completion of the cycle. The state function was called the internal energy and it became the first law of thermodynamics.[12]

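Now equating (1) and (2) gives

$$\frac{Q_\text{H}}{T_\text{H}} - \frac{Q_\text{C}}{T_\text{C}} = 0$$

or

$$\frac{Q_\text{H}}{T_\text{H}} = \frac{Q_\text{C}}{T_\text{C}}.$$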

This implies that there is a function of state which is conserved over a complete cycle of the Carnot cycle. Clausius called this state function entropy. One can see that entropy was discovered through mathematics rather than through laboratory results. It is a mathematical construct and has no easy physical analogy. This makes the concept somewhat obscure or abstract, akin to how the concept of energy arose.

Clausius then asked what would happen if there should be less work produced by the system than that predicted by Carnot's principle. The right-hand side of the first equation would be the upper bound of the work output by the system, which would now be converted into an inequality:

$$W < \left(1 - \frac{T_\text{C}}{T_\text{H}}\right) Q_\text{H}.$$

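When the second equation is used to express the work as a difference in heats, we get

$$Q_\text{H} - Q_\text{C} < \left(1 - \frac{T_\text{C}}{T_\text{H}}\right) Q_\text{H} \quad \text{or} \quad Q_\text{C} > \frac{T_\text{C}}{T_\text{H}} Q_\text{H}.$$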

So more heat is given up to the cold reservoir than in the Carnot cycle. If we denote the entropies by S_i = Q_i/T_i for the two states, then the above inequality can be written as a decrease in the entropy

$$S_\text{H} - S_\text{C} < 0 \quad \text{or} \quad S_\text{H} < S_\text{C}.$$

The entropy that leaves the system is greater than the entropy that enters the system, implying that some irreversible process prevents the cycle from producing the maximum amount of work predicted by the Carnot equation.

The Carnot cycle and efficiency are useful because they define the upper bound of the possible work output and the efficiency of any classical thermodynamic system. Other cycles, such as the Otto cycle, Diesel cycle and Brayton cycle, can be analyzed from the standpoint of the Carnot cycle. Any machine or process that is claimed to produce an efficiency greater than the Carnot efficiency is not viable because it violates the second law of thermodynamics. For very small numbers of particles in the system, statistical thermodynamics must be used. The efficiency of devices such as photovoltaic cells requires an analysis from the standpoint of quantum mechanics.
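As a rough numerical check of the argument in this section, the sketch below computes the Carnot bound on work from equation (1) and the entropy balance QH/TH − QC/TC using equation (2). The function names and reservoir values are hypothetical, chosen only for illustration.

```python
def carnot_efficiency(t_hot, t_cold):
    """Carnot efficiency 1 - TC/TH for absolute temperatures in kelvin."""
    if not (t_hot > t_cold > 0):
        raise ValueError("require t_hot > t_cold > 0")
    return 1.0 - t_cold / t_hot


def max_work(q_hot, t_hot, t_cold):
    """Upper bound on work from equation (1): W = (1 - TC/TH) * QH."""
    return carnot_efficiency(t_hot, t_cold) * q_hot


def entropy_balance(q_hot, work, t_hot, t_cold):
    """Return QH/TH - QC/TC for an engine absorbing q_hot and delivering work."""
    q_cold = q_hot - work  # equation (2): W = QH - QC
    return q_hot / t_hot - q_cold / t_cold


# Illustrative values only: 1000 J absorbed at 500 K, rejected at 300 K.
w_max = max_work(1000.0, 500.0, 300.0)
reversible = entropy_balance(1000.0, w_max, 500.0, 300.0)
irreversible = entropy_balance(1000.0, 300.0, 500.0, 300.0)  # less work than the bound
print(w_max, reversible, irreversible)
```

For the reversible case the balance vanishes (up to floating-point rounding), while an engine delivering less than the Carnot work gives a negative value: more entropy leaves to the cold reservoir than entered from the hot one.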

Classical thermodynamics

| Conjugate variables of thermodynamics | |
|---|---|
| Pressure | Volume |
| (Stress) | (Strain) |
| Temperature | Entropy |
| Chemical potential | Particle number |

The thermodynamic definition of entropy was developed in the early 1850s by Rudolf Clausius and essentially describes how to measure the entropy of an isolated system in thermodynamic equilibrium with its parts. Clausius created the term entropy as an extensive thermodynamic variable that was shown to be useful in characterizing the Carnot cycle. Heat transfer along the isotherm steps of the Carnot cycle was found to be proportional to the temperature of a system (known as its absolute temperature). This relationship was expressed in increments of entropy equal to the ratio of incremental heat transfer divided by temperature, which was found to vary in the thermodynamic cycle but eventually return to the same value at the end of every cycle. Thus it was found to be a function of state, specifically a thermodynamic state of the system. Clausius wrote that he "intentionally formed the word Entropy as similar as possible to the word Energy", basing the term on the Greek ἡ τροπή tropē, "transformation".[13][note 1]

While Clausius based his definition on a reversible process, there are also irreversible processes that change entropy. Following the second law of thermodynamics, the entropy of an isolated system always increases in an irreversible process. The difference between an isolated system and a closed system is that heat may not flow to and from an isolated system, but heat flow to and from a closed system is possible. Nevertheless, for both closed and isolated systems, and indeed also in open systems, irreversible thermodynamic processes may occur.

According to the Clausius equality, for a reversible cyclic process:

$$\oint \frac{\delta Q_\text{rev}}{T} = 0.$$

This means the line integral $\int_L \frac{\delta Q_\text{rev}}{T}$ is path-independent.

So we can define a state function S called entropy, which satisfies

$$dS = \frac{\delta Q_\text{rev}}{T}.$$

To find the entropy difference between any two states of a system, the integral must be evaluated for some reversible path between the initial and final states.[14] Since entropy is a state function, the entropy change of the system for an irreversible path is the same as for a reversible path between the same two states.[15] However, the entropy change of the surroundings will be different.

We can only obtain the change of entropy by integrating the above formula. To obtain the absolute value of the entropy, we need the third law of thermodynamics, which states that S = 0 at absolute zero for perfect crystals.
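To illustrate obtaining an entropy change by integrating δQrev/T along a reversible path, the sketch below heats a body of constant heat capacity C, so that δQrev = C dT. The constant-C assumption, the function names, and the sample values are illustrative and not from the source; under that assumption the integral has the closed form C ln(T2/T1), which the numerical estimate should reproduce.

```python
import math


def entropy_change_numeric(heat_capacity, t_initial, t_final, steps=100_000):
    """Midpoint-rule estimate of the integral of dQ_rev / T, with dQ_rev = C dT."""
    dt = (t_final - t_initial) / steps
    total = 0.0
    for i in range(steps):
        t_mid = t_initial + (i + 0.5) * dt  # temperature at the middle of the slice
        total += heat_capacity * dt / t_mid
    return total


def entropy_change_exact(heat_capacity, t_initial, t_final):
    """Closed form for constant heat capacity: C * ln(T_final / T_initial)."""
    return heat_capacity * math.log(t_final / t_initial)


# Assumed constant C = 75.3 J/K (roughly one mole of liquid water), 298.15 K -> 348.15 K.
c = 75.3
numeric = entropy_change_numeric(c, 298.15, 348.15)
exact = entropy_change_exact(c, 298.15, 348.15)
print(f"numeric: {numeric:.6f} J/K, closed form: {exact:.6f} J/K")
```

Only the difference between the two states is obtained this way; fixing the absolute value still needs the third-law reference point described above.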

From a macroscopic perspective, in classical thermodynamics the entropy is interpreted as a state function of a thermodynamic system: that is, a property depending only on the current state of the system, independent of how that state came to be achieved. In any process where the system gives up energy ΔE, and its entropy falls by ΔS, a quantity at least TR ΔS of that energy must be given up to the system's surroundings as unusable heat (TR is the temperature of the system's external surroundings). Otherwise the process cannot go forward. In classical thermodynamics, the entropy of a system is defined only if it is in thermodynamic equilibrium.
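The bound stated above can be written as a one-line calculation: if at least TR ΔS of the energy given up must leave as unusable heat, at most ΔE − TR ΔS remains available. The helper below is a hypothetical sketch with made-up numbers, using the magnitudes ΔE and ΔS as positive quantities.

```python
def max_useful_work(energy_given_up, entropy_decrease, t_surroundings):
    """Upper bound on useful work (J) when a system gives up energy_given_up (J)
    and its entropy falls by entropy_decrease (J/K), with surroundings at
    t_surroundings (K). Both inputs are treated as positive magnitudes."""
    unusable_heat = t_surroundings * entropy_decrease  # minimum heat rejected, TR * dS
    return energy_given_up - unusable_heat


# Illustrative values only: 1000 J given up, entropy falls by 2 J/K, surroundings at 300 K.
print(max_useful_work(1000.0, 2.0, 300.0))  # 400.0
```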

Statistical mechanics

The statistical definition was developed by Ludwig Boltzmann in the 1870s by analyzing the statistical behavior of the microscopic components of the system. Boltzmann showed that this definition of entropy was equivalent to the thermodynamic entropy to within a constant factor, which has since been known as Boltzmann's constant. In summary, the thermodynamic definition of entropy provides the experimental definition of entropy, while the statistical definition of entropy extends the concept, providing an explanation and a deeper understanding of its nature.

The interpretation of entropy in statistical mechanics is the measure of uncertainty, or mixedupness in the phrase of Gibbs, which remains about a system after its observable macroscopic properties, such as temperature, pressure and volume, have been taken into account. For a given set of macroscopic variables, the entropy measures the degree to which the probability of the system is spread out over different possible microstates. In contrast to the macrostate, which characterizes plainly observable average quantities, a microstate specifies all molecular details about the system including the position and velocity of every molecule. The more such states available to the system with appreciable probability, the greater the entropy. In statistical mechanics, entropy is a measure of the number of ways in which a system may be arranged, often taken to be a measure of "disorder" (the higher the entropy, the higher the disorder).[16][17][18] This definition describes the entropy as being proportional to the natural logarithm of the number of possible microscopic configurations of the individual atoms and molecules of the system (microstates) which could give rise to the observed macroscopic state (macrostate) of the system. The constant of proportionality is the Boltzmann constant.

Specifically, entropy is a logarithmic measure of the number of states with significant probability of being occupied:

$$S = -k_\mathrm{B} \sum_i p_i \ln p_i,$$

or, equivalently, −kB times the expected value of the logarithm of the probability that a microstate will be occupied:

$$S = -k_\mathrm{B} \langle \ln p \rangle,$$

where kB is the Boltzmann constant, equal to 1.38065×10⁻²³ J/K. The summation is over all the possible microstates of the system, and p_i is the probability that the system is in the i-th microstate.[19] This definition assumes that the basis set of states has been picked so that there is no information on their relative phases. In a different basis set, the more general expression is

$$S = -k_\mathrm{B} \operatorname{Tr}(\rho \ln \rho),$$

where ρ is the density matrix, Tr is the trace (linear algebra), and ln is the matrix logarithm. This density matrix formulation is not needed in cases of thermal equilibrium so long as the basis states are chosen to be energy eigenstates. For most practical purposes, this can be taken as the fundamental definition of entropy since all other formulas for S can be mathematically derived from it, but not vice versa.

In what has been called the fundamental assumption of statistical thermodynamics or the fundamental postulate in statistical mechanics, the occupation of any microstate is assumed to be equally probable (i.e. p_i = 1/Ω, where Ω is the number of microstates); this assumption is usually justified for an isolated system in equilibrium.[20] Then the previous equation reduces to

$$S = k_\mathrm{B} \ln \Omega.$$

In thermodynamics, such a system is one in which the volume, number of molecules, and internal energy are fixed (the microcanonical ensemble).
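The reduction described above can be checked numerically. The sketch below evaluates the probability-weighted sum −kB Σ p_i ln p_i for a small set of microstates and compares it with kB ln Ω when all Ω states are equally likely. The function names and the example distributions are hypothetical; the value of kB is the exact SI value.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def gibbs_entropy(probabilities):
    """Return -k_B * sum(p_i * ln p_i); states with p_i = 0 contribute nothing."""
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return -K_B * sum(p * math.log(p) for p in probabilities if p > 0.0)


def boltzmann_entropy(omega):
    """Return k_B * ln(omega) for omega equally probable microstates."""
    return K_B * math.log(omega)


omega = 8
uniform = [1.0 / omega] * omega   # equal occupation, p_i = 1/omega
skewed = [0.65] + [0.05] * 7      # same 8 states, unevenly occupied

print(gibbs_entropy(uniform), boltzmann_entropy(omega))
print(gibbs_entropy(skewed))
```

The uniform distribution reproduces kB ln Ω, and any uneven distribution over the same states gives a smaller value, consistent with the statement that entropy grows as probability spreads over more microstates.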

The most general interpretation of entropy is as a measure of our uncertainty about a system. The equilibrium state of a system maximizes the entropy because we have lost all information about the initial conditions except for the conserved variables; maximizing the entropy maximizes our ignorance about the details of the system.[21] This uncertainty is not of the everyday subjective kind, but rather the uncertainty inherent to the experimental method and interpretative model.

The interpretative model has a central role in determining entropy. The qualifier "for a given set of macroscopic variables" above has deep implications: if two observers use different sets of macroscopic variables, they see different entropies. For example, if observer A uses the variables U, V and W, and observer B uses U, V, W, X, then, by changing X, observer B can cause an effect that looks like a violation of the second law of thermodynamics to observer A. In other words: the set of macroscopic variables one chooses must include everything that may change in the experiment, otherwise one might see decreasing entropy![22]

Entropy can be defined for any Markov process with reversible dynamics and the detailed balance property.

In Boltzmann's 1896 Lectures on Gas Theory, he showed that this expression gives a measure of entropy for systems of atoms and molecules in the gas phase, thus providing a measure for the entropy of classical thermodynamics.

Entropy of a system

Entropy is the above mentioned unexpected and, to some, obscure integral that arises directly from the Carnot cycle. It is reversible heat divided by temperature. It is also, remarkably, a fundamental and very useful function of state.

In a thermodynamic system, pressure, density, and temperature tend to become uniform over time because this equilibrium state has higher probability (more possible combinations of microstates) than any other; see statistical mechanics. As an example, for a glass of ice water in air at room temperature, the difference in temperature between a warm room (the surroundings) and cold glass of ice and water (the system and not part of the room), begins to be equalized as portions of the thermal energy from the warm surroundings spread to the cooler system of ice and water. Over time the temperature of the glass and its contents and the temperature of the room become equal. The entropy of the room has decreased as some of its energy has been dispersed to the ice and water. However, as calculated in the example, the entropy of the system of ice and water has increased more than the entropy of the surrounding room has decreased. In an isolated system such as the room and ice water taken together, the dispersal of energy from warmer to cooler always results in a net increase in entropy. Thus, when the "universe" of the room and ice water system has reached a temperature equilibrium, the entropy change from the initial state is at a maximum. The entropy of the thermodynamic system is a measure of how far the equalization has progressed.

Thermodynamic entropy is a non-conserved state function that is of great importance in the sciences of physics and chemistry.[16][23] Historically, the concept of entropy evolved to explain why some processes (permitted by conservation laws) occur spontaneously while their time reversals (also permitted by conservation laws) do not; systems tend to progress in the direction of increasing entropy.[24][25] For isolated systems, entropy never decreases.[23] This fact has several important consequences in science: first, it prohibits "perpetual motion" machines; and second, it implies the arrow of entropy has the same direction as the arrow of time. Increases in entropy correspond to irreversible changes in a system, because some energy is expended as waste heat, limiting the amount of work a system can do.[16][17][26][27]

Unlike many other functions of state, entropy cannot be directly observed but must be calculated. Entropy can be calculated for a substance as the standard molar entropy from absolute zero (also known as absolute entropy) or as a difference in entropy from some other reference state which is defined as zero entropy. Entropy has the dimension of energy divided by temperature, which has a unit of joules per kelvin (J/K) in the International System of Units. While these are the same units as heat capacity, the two concepts are distinct.[28] Entropy is not a conserved quantity: for example, in an isolated system with non-uniform temperature, heat might irreversibly flow and the temperature become more uniform such that entropy increases. The second law of thermodynamics states that the entropy of an isolated system may increase or otherwise remain constant. Chemical reactions cause changes in entropy, and entropy plays an important role in determining in which direction a chemical reaction spontaneously proceeds.

One dictionary definition of entropy is that it is "a measure of thermal energy per unit temperature that is not available for useful work". For instance, a substance at uniform temperature is at maximum entropy and cannot drive a heat engine. A substance at non-uniform temperature is at a lower entropy (than if the heat distribution is allowed to even out) and some of the thermal energy can drive a heat engine.

A special case of entropy increase, the entropy of mixing, occurs when two or more different substances are mixed. If the substances are at the same temperature and pressure, there is no net exchange of heat or work; the entropy change is entirely due to the mixing of the different substances. At a statistical mechanical level, this results from the change in available volume per particle with mixing.[29]
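For a concrete number, the sketch below uses the standard ideal-mixing expression ΔS_mix = −R Σ n_i ln x_i, where n_i are the amounts of each substance and x_i their mole fractions. This formula is not stated in the text above; it is the usual result for ideal gases (or ideal solutions) mixed at the same temperature and pressure, and the function name and sample amounts are hypothetical.

```python
import math

R = 8.314462618  # molar gas constant in J/(mol K)


def entropy_of_mixing(moles):
    """Ideal entropy of mixing in J/K for a list of amounts in moles.

    Assumes ideal behavior and a common initial temperature and pressure.
    """
    total = sum(moles)
    if total <= 0:
        raise ValueError("total amount must be positive")
    # -R * sum(n_i * ln x_i), with x_i = n_i / total; empty components are skipped
    return -R * sum(n * math.log(n / total) for n in moles if n > 0)


# Illustrative case: one mole each of two different ideal gases.
print(entropy_of_mixing([1.0, 1.0]))
```

A single pure component gives zero, and the value grows as the components become more evenly mixed.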

Second law of thermodynamics

The second law of thermodynamics requires that, in general, the total entropy of any system can't decrease other than by increasing the entropy of some other system. Hence, in a system isolated from its environment, the entropy of that system tends not to decrease. It follows that heat can't flow from a colder body to a hotter body without the application of work (the imposition of order) to the colder body. Secondly, it is impossible for any device operating on a cycle to produce net work from a single temperature reservoir; the production of net work requires flow of heat from a hotter reservoir to a colder reservoir, or a single expanding reservoir undergoing adiabatic cooling, which performs adiabatic work. As a result, there is no possibility of a perpetual motion system. It follows that a reduction in the increase of entropy in a specified process, such as a chemical reaction, means that it is energetically more efficient.

It follows from the second law of thermodynamics that the entropy of a system that is not isolated may decrease. An air conditioner, for example, may cool the air in a room, thus reducing the entropy of the air of that system. The heat expelled from the room (the system), which the air conditioner transports and discharges to the outside air, always makes a bigger contribution to the entropy of the environment than the decrease of the entropy of the air of that system. Thus, the total of entropy of the room plus the entropy of the environment increases, in agreement with the second law of thermodynamics.

In mechanics, the second law in conjunction with the fundamental thermodynamic relation places limits on a system's ability to do useful work.[30] The entropy change of a system at temperature T absorbing an infinitesimal amount of heat δq in a reversible way, is given by δq/T. More explicitly, an energy TR S is not available to do useful work, where TR is the temperature of the coldest accessible reservoir or heat sink external to the system. For further discussion, see Exergy.

Statistical mechanics demonstrates that entropy is governed by probability, thus allowing for a decrease in disorder even in an isolated system. Although this is possible, such an event has a small probability of occurring, making it unlikely.[31]

Applications

The fundamental thermodynamic relation

The entropy of a system depends on its internal energy and its external parameters, such as its volume. In the thermodynamic limit, this fact leads to an equation relating the change in the internal energy U to changes in the entropy and the external parameters. This relation is known as the fundamental thermodynamic relation. If external pressure P bears on the volume V as the only external parameter, this relation is:

$$dU = T\,dS - P\,dV.$$

Since both internal energy and entropy are monotonic functions of temperature T, implying that the internal energy is fixed when one specifies the entropy and the volume, this relation is valid even if the change from one state of thermal equilibrium to another with infinitesimally larger entropy and volume happens in a non-quasistatic way (so during this change the system may be very far out of thermal equilibrium and then the entropy, pressure and temperature may not exist).

The fundamental thermodynamic relation implies many thermodynamic identities that are valid in general, independent of the microscopic details of the system. Important examples are the Maxwell relations and the relations between heat capacities.
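As a short worked example of such an identity, one Maxwell relation follows directly from the fundamental relation in its standard form, dU = T dS − P dV, assuming U(S, V) is smooth enough for its mixed second derivatives to commute. The steps are a sketch of the usual textbook argument:

```latex
% Read off the first derivatives of U(S, V) from dU = T dS - P dV:
T = \left(\frac{\partial U}{\partial S}\right)_V ,
\qquad
-P = \left(\frac{\partial U}{\partial V}\right)_S .

% Equality of the mixed second derivatives of U:
\frac{\partial}{\partial V}\left(\frac{\partial U}{\partial S}\right)_V
= \frac{\partial}{\partial S}\left(\frac{\partial U}{\partial V}\right)_S

% which gives the Maxwell relation
\left(\frac{\partial T}{\partial V}\right)_S
= -\left(\frac{\partial P}{\partial S}\right)_V .
```

The result involves only macroscopic variables, which is why it holds independently of the microscopic details of the system.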

Entropy in chemical thermodynamics

Thermodynamic entropy is central in chemical thermodynamics, enabling changes to be quantified and the outcome of reactions predicted. The second law of thermodynamics states that entropy in an isolated system – the combination of a subsystem under study and its surroundings – increases during all spontaneous chemical and physical processes. The Clausius equation of δqrev/T = ΔS introduces the measurement of entropy change, ΔS. Entropy change describes the direction and quantifies the magnitude of simple changes such as heat transfer between systems – always from hotter to cooler spontaneously.

The thermodynamic entropy therefore has the dimension of energy divided by temperature, and the unit joule per kelvin (J/K) in the International System of Units (SI).

Thermodynamic entropy is an extensive property, meaning that it scales with the size or extent of a system. In many processes it is useful to specify the entropy as an intensive property independent of the size, as a specific entropy characteristic of the type of system studied. Specific entropy may be expressed relative to a unit of mass, typically the kilogram (unit: J kg⁻¹ K⁻¹). Alternatively, in chemistry, it may be referred to one mole of substance, in which case it is called the molar entropy, with a unit of J mol⁻¹ K⁻¹.

Thus, when one mole of a substance at about 0 K is warmed by its surroundings to 298 K, the sum of the incremental values of $q_{\text{rev}}/T$ constitutes each element's or compound's standard molar entropy, an indicator of the amount of energy stored by a substance at 298 K.[32][33] Entropy change also measures the mixing of substances as a summation of their relative quantities in the final mixture.[34]

Entropy is equally essential in predicting the extent and direction of complex chemical reactions. For such applications, ΔS must be incorporated in an expression that includes both the system and its surroundings, $\Delta S_{\text{universe}} = \Delta S_{\text{surroundings}} + \Delta S_{\text{system}}$. This expression becomes, via some steps, the Gibbs free energy equation for reactants and products in the system: ΔG [the Gibbs free energy change of the system] = ΔH [the enthalpy change] − T ΔS [the entropy change].[32]
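
As a rough illustration of how the sign of ΔG = ΔH − T ΔS indicates whether a process is spontaneous, the sketch below evaluates it for the melting of ice below and above the melting point. The function name `gibbs_free_energy_change` and the numerical values (approximate textbook figures for water, treated here as independent of temperature) are assumptions made for illustration and are not taken from the cited sources.

```python
def gibbs_free_energy_change(delta_h, delta_s, temperature):
    """Return dG = dH - T*dS in J/mol (dH in J/mol, dS in J/(mol K), T in K)."""
    return delta_h - temperature * delta_s


# Approximate values for melting ice, assumed constant with temperature.
DELTA_H_FUS = 6010.0  # J/mol
DELTA_S_FUS = 22.0    # J/(mol K)

for t in (263.15, 298.15):
    dg = gibbs_free_energy_change(DELTA_H_FUS, DELTA_S_FUS, t)
    verdict = "spontaneous" if dg < 0 else "not spontaneous"
    print(f"T = {t:.2f} K: dG = {dg:.1f} J/mol ({verdict})")
```

With these inputs ΔG is positive at 263.15 K and negative at 298.15 K, which matches the everyday observation that ice melts spontaneously only above its melting point.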

Entropy balance equation for open systems

In chemical engineering, the principles of thermodynamics are commonly applied to "open systems", i.e. those in which heat, work, and mass flow across the system boundary. Flows of both heat ($\dot{Q}$) and work, i.e. $\dot{W}_S$ (shaft work) and P(dV/dt) (pressure-volume work), across the system boundaries, in general cause changes in the entropy of the system. Transfer as heat entails entropy transfer $\dot{Q}/T$, where T is the absolute thermodynamic temperature of the system at the point of the heat flow. If there are mass flows across the system boundaries, they also influence the total entropy of the system. This account, in terms of heat and work, is valid only for cases in which the work and heat transfers are by paths physically distinct from the paths of entry and exit of matter from the system.[35][36]

To derive a generalized entropy balance equation, we start with the general balance equation for the change in any extensive quantity Θ in a thermodynamic system, a quantity that may be either conserved, such as energy, or non-conserved, such as entropy. The basic generic balance expression states that dΘ/dt, i.e. the rate of change of Θ in the system, equals the rate at which Θ enters the system at the boundaries, minus the rate at which Θ leaves the system across the system boundaries, plus the rate at which Θ is generated within the system. For an open thermodynamic system in which heat and work are transferred by paths separate from the paths for transfer of matter, using this generic balance equation, with respect to the rate of change with time t of the extensive quantity entropy S, the entropy balance equation is:[37][note 2]

$$\frac{dS}{dt} = \sum_{k=1}^{K} \dot{M}_k \hat{S}_k + \frac{\dot{Q}}{T} + \dot{S}_{\text{gen}}$$

where

- $\sum_{k=1}^{K} \dot{M}_k \hat{S}_k$ = the net rate of entropy flow due to the flows of mass into and out of the system (where $\hat{S}$ = entropy per unit mass).

- $\dot{Q}/T$ = the rate of entropy flow due to the flow of heat across the system boundary.

- $\dot{S}_{\text{gen}}$ = the rate of entropy production within the system. This entropy production arises from processes within the system, including chemical reactions, internal matter diffusion, internal heat transfer, and frictional effects such as viscosity occurring within the system from mechanical work transfer to or from the system.

Note, also, that if there are multiple heat flows, the term $\dot{Q}/T$ is replaced by $\sum_j \dot{Q}_j/T_j$, where $\dot{Q}_j$ is the heat flow and $T_j$ is the temperature at the jth heat flow port into the system.
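
The balance described above (a mass-flow term, a heat-flow term summed over heat ports, and an internal generation term) can be sketched as a small function. The name `entropy_rate`, the sign convention (flows into the system are positive) and the numbers in the usage lines are hypothetical choices for illustration only.

```python
def entropy_rate(mass_flows, heat_flows, generation_rate):
    """Rate of change of the system entropy, dS/dt, in W/K.

    mass_flows: iterable of (m_dot, s_hat) pairs; m_dot in kg/s (positive
        into the system, negative out of it), s_hat in J/(kg K).
    heat_flows: iterable of (q_dot, temperature) pairs; q_dot in W (positive
        into the system), temperature in K at that heat-flow port.
    generation_rate: entropy production inside the system in W/K.
    """
    if generation_rate < 0:
        raise ValueError("entropy production cannot be negative")
    mass_term = sum(m_dot * s_hat for m_dot, s_hat in mass_flows)
    heat_term = sum(q_dot / temp for q_dot, temp in heat_flows)
    return mass_term + heat_term + generation_rate


# Hypothetical device: one inlet, one outlet, one heat port at 500 K.
rate = entropy_rate(
    mass_flows=[(2.0, 1000.0), (-2.0, 1100.0)],
    heat_flows=[(150000.0, 500.0)],
    generation_rate=50.0,
)
print(f"dS/dt = {rate:.1f} W/K")
```

Treating outflows as negative mass flows keeps the function close to the form of the balance equation, in which a single sum covers both entering and leaving streams.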

Entropy change formulas for simple processes

For certain simple transformations in systems of constant composition, the entropy changes are given by simple formulas.[38]

Isothermal expansion or compression of an ideal gas

For the expansion (or compression) of an ideal gas from an initial volume $V_0$ and pressure $P_0$ to a final volume $V$ and pressure $P$ at any constant temperature, the change in entropy is given by:

$$\Delta S = nR \ln\frac{V}{V_0} = -nR \ln\frac{P}{P_0}$$

Here $n$ is the number of moles of gas and $R$ is the ideal gas constant. These equations also apply for expansion into a finite vacuum or a throttling process, where the temperature, internal energy and enthalpy for an ideal gas remain constant.
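
For an ideal gas at constant temperature the standard result is ΔS = nR ln(V/V0), or equivalently ΔS = −nR ln(P/P0) because PV is constant along an isotherm. The following minimal sketch evaluates both forms; the function names are illustrative assumptions.

```python
import math

R = 8.314462618  # J/(mol K), molar gas constant


def isothermal_entropy_change(n_moles, v_initial, v_final):
    """dS = n R ln(V_final / V_initial) for an ideal gas at constant T."""
    if v_initial <= 0 or v_final <= 0:
        raise ValueError("volumes must be positive")
    return n_moles * R * math.log(v_final / v_initial)


def isothermal_entropy_change_from_pressure(n_moles, p_initial, p_final):
    """Same change written with pressures: dS = -n R ln(P_final / P_initial)."""
    if p_initial <= 0 or p_final <= 0:
        raise ValueError("pressures must be positive")
    return -n_moles * R * math.log(p_final / p_initial)


# Doubling the volume of one mole halves its pressure at constant T.
ds_volume = isothermal_entropy_change(1.0, 1.0, 2.0)
ds_pressure = isothermal_entropy_change_from_pressure(1.0, 2.0, 1.0)
print(f"{ds_volume:.2f} J/K, {ds_pressure:.2f} J/K")
```

Doubling the volume of one mole gives R ln 2, about 5.76 J/K, whichever form is used.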

Cooling and heating

For heating or cooling of any system (gas, liquid or solid) at constant pressure from an initial temperature $T_0$ to a final temperature $T$, the entropy change is

$$\Delta S = n C_P \ln\frac{T}{T_0},$$

provided that the constant-pressure molar heat capacity (or specific heat) $C_P$ is constant and that no phase transition occurs in this temperature interval.

Similarly at constant volume, the entropy change is

$$\Delta S = n C_v \ln\frac{T}{T_0},$$

where the constant-volume heat capacity Cv is constant and there is no phase change.

At low temperatures near absolute zero, heat capacities of solids quickly drop off to near zero, so the assumption of constant heat capacity does not apply.[39]

Since entropy is a state function, the entropy change of any process in which temperature and volume both vary is the same as for a path divided into two steps: heating at constant volume and expansion at constant temperature. For an ideal gas, the total entropy change is[40]

$$\Delta S = n C_v \ln\frac{T}{T_0} + nR \ln\frac{V}{V_0}.$$

Similarly if the temperature and pressure of an ideal gas both vary,

$$\Delta S = n C_P \ln\frac{T}{T_0} - nR \ln\frac{P}{P_0}.$$
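
Because entropy is a state function, the temperature-volume form, ΔS = n Cv ln(T/T0) + nR ln(V/V0), and the temperature-pressure form, ΔS = n CP ln(T/T0) − nR ln(P/P0), must agree for the same pair of ideal-gas states. The sketch below checks this numerically. The function names and the chosen states are hypothetical, and the gas is assumed to be monatomic (Cv = 3R/2) with CP = Cv + R.

```python
import math

R = 8.314462618  # J/(mol K), molar gas constant


def entropy_change_tv(n, cv, t0, t, v0, v):
    """dS = n Cv ln(T/T0) + n R ln(V/V0) for an ideal gas."""
    return n * cv * math.log(t / t0) + n * R * math.log(v / v0)


def entropy_change_tp(n, cp, t0, t, p0, p):
    """dS = n Cp ln(T/T0) - n R ln(P/P0) for an ideal gas."""
    return n * cp * math.log(t / t0) - n * R * math.log(p / p0)


# Hypothetical monatomic ideal gas: Cv = 3R/2 and Cp = Cv + R.
n, cv = 1.0, 1.5 * R
cp = cv + R
t0, v0 = 300.0, 0.010  # K, m^3
t, v = 450.0, 0.025    # K, m^3
p0 = n * R * t0 / v0   # Pa, from the ideal gas law
p = n * R * t / v      # Pa

ds_tv = entropy_change_tv(n, cv, t0, t, v0, v)
ds_tp = entropy_change_tp(n, cp, t0, t, p0, p)
print(f"from (T, V): {ds_tv:.4f} J/K; from (T, P): {ds_tp:.4f} J/K")
```

The two results coincide up to rounding, as expected when both formulas describe the same change of state.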

Phase transitions

Reversible phase transitions occur at constant temperature and pressure. The reversible heat is the enthalpy change for the transition, and the entropy change is the enthalpy change divided by the thermodynamic temperature. For fusion (melting) of a solid to a liquid at the melting point Tm, the entropy of fusion is

$$\Delta S_{\text{fus}} = \frac{\Delta H_{\text{fus}}}{T_m}.$$

Similarly, for vaporization of a liquid to a gas at the boiling point Tb, the entropy of vaporization is

$$\Delta S_{\text{vap}} = \frac{\Delta H_{\text{vap}}}{T_b}.$$
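
As a quick numerical check of the rule that the transition entropy is the transition enthalpy divided by the transition temperature, the sketch below applies it to water. The function name and the input enthalpies (approximate textbook values at a pressure of about 1 atm) are assumptions for illustration.

```python
def transition_entropy(delta_h, temperature):
    """Entropy of a reversible phase transition, dS = dH / T, in J/(mol K)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return delta_h / temperature


# Approximate values for water.
ds_fus = transition_entropy(6010.0, 273.15)   # melting at 0 degrees Celsius
ds_vap = transition_entropy(40650.0, 373.15)  # boiling at 100 degrees Celsius
print(f"fusion: {ds_fus:.1f} J/(mol K), vaporization: {ds_vap:.1f} J/(mol K)")
```

With these inputs the entropy of vaporization (about 109 J mol⁻¹ K⁻¹) is roughly five times the entropy of fusion (about 22 J mol⁻¹ K⁻¹).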

Approaches to understanding entropy

Because entropy is a fundamental aspect of thermodynamics and physics, several different approaches to it beyond those of Clausius and Boltzmann are valid.

Standard textbook definitions

The following is a list of additional definitions of entropy from a collection of textbooks:

- a measure of energy dispersal at a specific temperature.

- a measure of disorder in the universe or of the availability of the energy in a system to do work.[41]

- a measure of a system's thermal energy per unit temperature that is unavailable for doing useful work.[42]

In Boltzmann's definition, entropy is a measure of the number of possible microscopic states (or microstates) of a system in thermodynamic equilibrium. Consistent with the Boltzmann definition, the second law of thermodynamics needs to be reworded to state that entropy increases over time, though the underlying principle remains the same.

Order and disorder

Entropy has often been loosely associated with the amount of order or disorder, or of chaos, in a thermodynamic system. The traditional qualitative description of entropy is that it refers to changes in the status quo of the system and is a measure of "molecular disorder" and the amount of wasted energy in a dynamical energy transformation from one state or form to another. In this direction, several recent authors have derived exact entropy formulas to account for and measure disorder and order in atomic and molecular assemblies.[43][44][45] One of the simpler entropy order/disorder formulas is that derived in 1984 by thermodynamic physicist Peter Landsberg, based on a combination of thermodynamics and information theory arguments. He argues that when constraints operate on a system, such that it is prevented from entering one or more of its possible or permitted states, as contrasted with its forbidden states, the measure of the total amount of "disorder" in the system is given by:[44][45]

$$\text{Disorder} = \frac{C_D}{C_I}$$

Similarly, the total amount of "order" in the system is given by:

$$\text{Order} = 1 - \frac{C_O}{C_I}$$

Here $C_D$ is the "disorder" capacity of the system, which is the entropy of the parts contained in the permitted ensemble, $C_I$ is the "information" capacity of the system, an expression similar to Shannon's channel capacity, and $C_O$ is the "order" capacity of the system.[43]

Energy dispersal

The concept of entropy can be described qualitatively as a measure of energy dispersal at a specific temperature.[46] Similar terms have been in use from early in the history of classical thermodynamics, and with the development of statistical thermodynamics and quantum theory, entropy changes have been described in terms of the mixing or "spreading" of the total energy of each constituent of a system over its particular quantized energy levels.

Ambiguities in the terms disorder and chaos, which usually have meanings directly opposed to equilibrium, contribute to widespread confusion and hamper comprehension of entropy for most students.[47] As the second law of thermodynamics shows, in an isolated system internal portions at different temperatures tend to adjust to a single uniform temperature and thus produce equilibrium. A recently developed educational approach avoids ambiguous terms and describes such spreading out of energy as dispersal, which leads to loss of the differentials required for work even though the total energy remains constant in accordance with the first law of thermodynamics[48] (compare discussion in next section). Physical chemist Peter Atkins, for example, who previously wrote of dispersal leading to a disordered state, now writes that "spontaneous changes are always accompanied by a dispersal of energy".[49]

Relating entropy to energy usefulness

Following on from the above, it is possible (in a thermal context) to regard entropy as an indicator or measure of the effectiveness or usefulness of a particular quantity of energy.[50] This is because energy supplied at a high temperature (i.e. with low entropy) tends to be more useful than the same amount of energy available at room temperature. Mixing a hot parcel of a fluid with a cold one produces a parcel of intermediate temperature, in which the overall increase in entropy represents a "loss" which can never be replaced.
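
The mixing example can be made quantitative under simple assumptions: two equal masses of the same fluid, a constant specific heat, no phase change and no heat exchange with the surroundings. Each parcel then changes entropy by m c ln(T_final/T_initial). The function name and the numbers below (roughly the specific heat of liquid water) are illustrative assumptions.

```python
import math


def mixing_entropy_change(mass, specific_heat, t_hot, t_cold):
    """Mix two equal masses of one fluid adiabatically.

    Assumes constant specific heat and no phase change.
    Returns (final temperature in K, total entropy change in J/K).
    """
    t_final = 0.5 * (t_hot + t_cold)
    ds_hot = mass * specific_heat * math.log(t_final / t_hot)    # negative
    ds_cold = mass * specific_heat * math.log(t_final / t_cold)  # positive
    return t_final, ds_hot + ds_cold


# 1 kg of water at 80 degrees Celsius mixed with 1 kg at 20 degrees Celsius.
t_final, ds_total = mixing_entropy_change(1.0, 4186.0, 353.15, 293.15)
print(f"final temperature {t_final:.2f} K, entropy change {ds_total:.1f} J/K")
```

The hot parcel loses less entropy than the cold parcel gains, so the total is positive for any two different starting temperatures. This is the irrecoverable "loss" referred to above.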

Thus, the fact that the entropy of the universe is steadily increasing means that its total energy is becoming less useful: eventually, this will lead to the "heat death of the Universe".[51]

Entropy and adiabatic accessibility

A definition of entropy based entirely on the relation of adiabatic accessibility between equilibrium states was given by E. H. Lieb and J. Yngvason in 1999.[52] This approach has several predecessors, including the pioneering work of Constantin Carathéodory from 1909[53] and the monograph by R. Giles.[54] In the setting of Lieb and Yngvason one starts by picking, for a unit amount of the substance under consideration, two reference states $X_0$ and $X_1$ such that the latter is adiabatically accessible from the former but not vice versa. Defining the entropies of the reference states to be 0 and 1 respectively, the entropy of a state $X$ is defined as the largest number $\lambda$ such that $X$ is adiabatically accessible from a composite state consisting of an amount $\lambda$ in the state $X_1$ and a complementary amount, $(1 - \lambda)$, in the state $X_0$. A simple but important result within this setting is that entropy is uniquely determined, apart from a choice of unit and an additive constant for each chemical element, by the following properties: it is monotonic with respect to the relation of adiabatic accessibility, additive on composite systems, and extensive under scaling.

Entropy in quantum mechanics

In quantum statistical mechanics, the concept of entropy was developed by John von Neumann and is generally referred to as "von Neumann entropy",

$$S = -k_{\mathrm{B}} \operatorname{Tr}(\rho \log \rho),$$

where ρ is the density matrix and Tr is the trace operator.

This upholds the correspondence principle, because in the classical limit, when the phases between the basis states used for the classical probabilities are purely random, this expression is equivalent to the familiar classical definition of entropy,

$$S = -k_{\mathrm{B}} \sum_i p_i \log p_i,$$

i.e. in such a basis the density matrix is diagonal.
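
For a single two-level system the von Neumann entropy, −Tr(ρ ln ρ) in units of the Boltzmann constant, can be evaluated from the two eigenvalues of the 2×2 density matrix, which have a closed form. The sketch below is a minimal illustration restricted to that case. The function name and the example states are assumptions, and a general implementation would need a numerical eigenvalue solver.

```python
import math


def qubit_von_neumann_entropy(rho00, rho01, rho11):
    """Return S / k_B = -Tr(rho ln rho) for a 2x2 density matrix.

    rho00, rho11: real diagonal elements (expected to sum to 1).
    rho01: off-diagonal element (may be complex); rho10 is its conjugate.
    """
    mean = 0.5 * (rho00 + rho11)
    radius = math.sqrt((0.5 * (rho00 - rho11)) ** 2 + abs(rho01) ** 2)
    eigenvalues = (mean + radius, mean - radius)
    # Eigenvalues equal to zero contribute nothing (p ln p -> 0 as p -> 0).
    return sum(-p * math.log(p) for p in eigenvalues if p > 1e-15)


# Pure superposition state: full coherence between the basis states.
s_pure = qubit_von_neumann_entropy(0.5, 0.5, 0.5)
# Same populations with the off-diagonal terms removed (diagonal matrix).
s_mixed = qubit_von_neumann_entropy(0.5, 0.0, 0.5)
print(s_pure, s_mixed)
```

The pure state has zero entropy, while the diagonal matrix with the same populations gives ln 2, which is the classical value −Σ pᵢ ln pᵢ for two equally likely outcomes.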

Von Neumann established a rigorous mathematical framework for quantum mechanics with his work Mathematische Grundlagen der Quantenmechanik. He provided in this work a theory of measurement, where the usual notion of wave function collapse is described as an irreversible process (the so-called von Neumann or projective measurement). Using this concept, in conjunction with the density matrix he extended the classical concept of entropy into the quantum domain.

Information theory

I thought of calling it "information", but the word was overly used, so I decided to call it "uncertainty". [...] Von Neumann told me, "You should call it entropy, for two reasons. In the first place your uncertainty function has been used in statistical mechanics under that name, so it already has a name. In the second place, and more important, nobody knows what entropy really is, so in a debate you will always have the advantage."

Conversation between Claude Shannon and John von Neumann regarding what name to give to the attenuation in phone-line signals[55]

When viewed in terms of information theory, the entropy state function is simply the amount of information (in the Shannon sense) that would be needed to specify the full microstate of the system. This is left unspecified by the macroscopic description.

In information theory, entropy is the measure of the amount of information that is missing before reception and is sometimes referred to as Shannon entropy.[56] Shannon entropy is a broad and general concept which finds applications in information theory as well as thermodynamics. It was originally devised by Claude Shannon in 1948 to study the amount of information in a transmitted message. The definition of the information entropy is, however, quite general, and is expressed in terms of a discrete set of probabilities $p_i$ so that

$$H = -\sum_{i} p_i \log p_i.$$

In the case of transmitted messages, these probabilities were the probabilities that a particular message was actually transmitted, and the entropy of the message system was a measure of the average amount of information in a message. For the case of equal probabilities (i.e. each message is equally probable), the Shannon entropy (in bits) is just the number of yes/no questions needed to determine the content of the message.[19]
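
The statement about equally probable messages can be checked directly from the definition H = −Σ pᵢ log pᵢ. The sketch below is a minimal implementation. The function name `shannon_entropy` and the example distributions are illustrative assumptions.

```python
import math


def shannon_entropy(probabilities, base=2.0):
    """H = -sum(p_i * log(p_i)); base 2 gives bits, base e gives nats."""
    probs = list(probabilities)
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Outcomes with p = 0 contribute nothing to the sum.
    return sum(-p * math.log(p, base) for p in probs if p > 0)


# Eight equally likely messages: three yes/no questions identify one of them.
h_uniform = shannon_entropy([1.0 / 8.0] * 8)
# An uneven distribution over three messages carries less than log2(3) bits.
h_uneven = shannon_entropy([0.5, 0.25, 0.25])
print(h_uniform, h_uneven)
```

For eight equally likely messages the result is 3 bits, matching the three yes/no questions needed to single out one message. The uneven distribution gives 1.5 bits.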

The question of the link between information entropy and thermodynamic entropy is a debated topic. While most authors argue that there is a link between the two,[57][58][59][60][61] a few argue that they have nothing to do with each other.[19]

The expressions for the two entropies are similar. If W is the number of microstates that can yield a given macrostate, and each microstate has the same a priori probability, then that probability is p = 1/W. The Shannon entropy (in nats) is:

$$H = -\sum_{i=1}^{W} p \ln p = \ln W$$

and if entropy is measured in units of k per nat, then the entropy is given[62] by:

$$S = k \ln W$$

which is the famous Boltzmann entropy formula when k is Boltzmann's constant, which may be interpreted as the thermodynamic entropy per nat. There are many ways of demonstrating the equivalence of "information entropy" and "physics entropy", that is, the equivalence of "Shannon entropy" and "Boltzmann entropy". Nevertheless, some authors argue for dropping the word entropy for the H function of information theory and using Shannon's other term "uncertainty" instead.[63]
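
The step from the Shannon expression to the Boltzmann formula can be verified numerically: for W equally likely microstates the sum −Σ p ln p with p = 1/W collapses to ln W, and multiplying by the Boltzmann constant gives S = k ln W. The function names and the choice W = 1000 in this sketch are illustrative assumptions.

```python
import math

K_B = 1.380649e-23  # J/K, Boltzmann constant (exact in the SI since 2019)


def uniform_entropy_nats(w):
    """Shannon entropy in nats of w equally likely microstates (p = 1/w)."""
    p = 1.0 / w
    return -sum(p * math.log(p) for _ in range(w))


def boltzmann_entropy(w):
    """Boltzmann entropy S = k ln W in J/K."""
    return K_B * math.log(w)


w = 1000
h_nats = uniform_entropy_nats(w)
print(h_nats, math.log(w))                # both close to ln(1000)
print(K_B * h_nats, boltzmann_entropy(w)) # the same entropy in J/K
```

Evaluating the sum term by term, instead of returning ln W directly, makes the equality a check of the algebra and not a restatement of it.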

Interdisciplinary applications of entropy

Although the concept of entropy was originally a thermodynamic construct, it has been adapted in other fields of study, including information theory, psychodynamics, thermoeconomics/ecological economics, and evolution.[64][65][66][67][68] For instance, an entropic argument has been recently proposed for explaining the preference of cave spiders in choosing a suitable area for laying their eggs.[69]

Thermodynamic and statistical mechanics concepts

- Entropy unit – a non-SI unit of thermodynamic entropy, usually denoted "e.u." and equal to one calorie per kelvin per mole, or 4.184 joules per kelvin per mole.[70]

- Gibbs entropy – the usual statistical mechanical entropy of a thermodynamic system.

- Boltzmann entropy – a type of Gibbs entropy, which neglects internal statistical correlations in the overall particle distribution.

- Tsallis entropy – a generalization of the standard Boltzmann-Gibbs entropy.

- Standard molar entropy – the entropy content of one mole of substance, under conditions of standard temperature and pressure.

- Residual entropy – the entropy present after a substance is cooled arbitrarily close to absolute zero.

- Entropy of mixing – the change in the entropy when two different chemical substances or components are mixed.

- Loop entropy – the entropy lost upon bringing together two residues of a polymer within a prescribed distance.

- Conformational entropy – the entropy associated with the physical arrangement of a polymer chain that assumes a compact or globular state in solution.

- Entropic force – a microscopic force or reaction tendency related to system organization changes, molecular frictional considerations, and statistical variations.

- Free entropy – an entropic thermodynamic potential analogous to the free energy.

- Entropic explosion – an explosion in which the reactants undergo a large change in volume without releasing a large amount of heat.

- Entropy change – a change in entropy dS between two equilibrium states is given by the heat transferred dQrev divided by the absolute temperature T of the system in this interval.

- Sackur-Tetrode entropy – the entropy of a monatomic classical ideal gas determined via quantum considerations.

The arrow of time

Entropy is the only quantity in the physical sciences that seems to imply a particular direction of progress, sometimes called an arrow of time. As time progresses, the second law of thermodynamics states that the entropy of an isolated system never decreases. Hence, from this perspective, entropy measurement is thought of as a kind of clock.

Cosmology

Since a finite universe is an isolated system, the Second Law of Thermodynamics states that its total entropy is constantly increasing. It has been speculated, since the 19th century, that the universe is fated to a heat death in which all the energy ends up as a homogeneous distribution of thermal energy, so that no more work can be extracted from any source.

If the universe can be considered to have generally increasing entropy, then – as Sir Roger Penrose has pointed out – gravity plays an important role in the increase because gravity causes dispersed matter to accumulate into stars, which collapse eventually into black holes. The entropy of a black hole is proportional to the surface area of the black hole's event horizon.[71] Jacob Bekenstein and Stephen Hawking have shown that black holes have the maximum possible entropy of any object of equal size. This makes them likely end points of all entropy-increasing processes, if they are totally effective matter and energy traps. However, the escape of energy from black holes might be possible due to quantum activity, see Hawking radiation. In 2014 Hawking changed his stance on some details, in a paper which largely redefined the event horizons of black holes, positing that black holes do not exist.[72]

The role of entropy in cosmology has remained a controversial subject since the time of Ludwig Boltzmann. Recent work has cast some doubt on the heat death hypothesis and the applicability of any simple thermodynamic model to the universe in general. Although entropy does increase in the model of an expanding universe, the maximum possible entropy rises much more rapidly, moving the universe further from the heat death with time, not closer.[73][74][75] This results in an "entropy gap" pushing the system further away from the posited heat death equilibrium.[76] Other complicating factors, such as the energy density of the vacuum and macroscopic quantum effects, are difficult to reconcile with thermodynamical models, making any predictions of large-scale thermodynamics extremely difficult.[77]

The entropy gap is widely believed to have been originally opened up by the early rapid exponential expansion of the universe.

Economics

Romanian American economist Nicholas Georgescu-Roegen, a progenitor in economics and a paradigm founder of ecological economics, made extensive use of the entropy concept in his magnum opus on The Entropy Law and the Economic Process.[78] Due to Georgescu-Roegen's work, the laws of thermodynamics now form an integral part of the ecological economics school.[79]:204f [80]:29–35 Although his work was blemished somewhat by mistakes, a full chapter on the economics of Georgescu-Roegen has approvingly been included in one elementary physics textbook on the historical development of thermodynamics.[81]:95–112

In economics, Georgescu-Roegen's work has generated the term 'entropy pessimism'.[82]:116 Since the 1990s, leading ecological economist and steady-state theorist Herman Daly, a student of Georgescu-Roegen, has been the economics profession's most influential proponent of the entropy pessimism position.[83]:545f

See also

- Autocatalytic reactions and order creation

- Brownian ratchet

- Clausius–Duhem inequality

- Configuration entropy

- Departure function

- Enthalpy

- Entropic force

- Entropy (information theory)

- Entropy (computing)

- Entropy and life

- Entropy (order and disorder)

- Entropy rate

- Geometrical frustration

- Harmonic entropy

- Laws of thermodynamics

- Multiplicity function

- Negentropy (negative entropy)

- Orders of magnitude (entropy)

- Stirling's formula

- Thermodynamic databases for pure substances

- Thermodynamic potential

- Wavelet entropy

- Phase space

Notes

References

- ↑ "Carnot, Sadi (1796–1832)". Wolfram Research. 2007. Retrieved 2010-02-24.

- ↑ McCulloch, Richard, S. (1876). Treatise on the Mechanical Theory of Heat and its Applications to the Steam-Engine, etc. D. Van Nostrand.

- 1 2 Clausius, Rudolf (1850). On the Motive Power of Heat, and on the Laws which can be deduced from it for the Theory of Heat. Poggendorff's Annalen der Physik, LXXIX (Dover Reprint). ISBN 0-486-59065-8.

- ↑ The scientific papers of J. Willard Gibbs in Two Volumes. 1. Longmans, Green, and Co. 1906. p. 11. Retrieved 2011-02-26.

- ↑ J. A. McGovern,"2.5 Entropy". Archived from the original on 2012-09-23. Retrieved 2013-02-05.

- ↑ "6.5 Irreversibility, Entropy Changes, and 'Lost Work'". web.mit.edu. Retrieved 21 May 2016.

- ↑ Lower, Stephen. "What is entropy?". www.chem1.com. Retrieved 21 May 2016.

- ↑ Lavenda, Bernard H. (2010). "2.3.4". A new perspective on thermodynamics (Online-Ausg. ed.). New York: Springer. ISBN 978-1-4419-1430-9.

- ↑ Carnot, Sadi (1986). Fox, Robert, ed. Reflexions on the motive power of fire. New York, NY: Lilian Barber Press. p. 26. ISBN 9780936508160.

- ↑ Truesdell, C. (1980). The tragicomical history of thermodynamics 1822-1854. New York: Springer. pp. 78–85. ISBN 9780387904030.

- ↑ Clerk Maxwell, James (2001). Pesic, Peter, ed. Theory of heat. Mineola: Dover Publications. pp. 115–158. ISBN 9780486417356.

- ↑ Rudolf Clausius (1867). The Mechanical Theory of Heat: With Its Applications to the Steam-engine and to the Physical Properties of Bodies. J. Van Voorst. p. 28. ISBN 9781498167338.

- ↑ Clausius, Rudolf (1865). Ueber verschiedene für die Anwendung bequeme Formen der Hauptgleichungen der mechanischen Wärmetheorie: vorgetragen in der naturforsch. Gesellschaft den 24. April 1865. p. 46.

- ↑ Atkins, Peter; Julio De Paula (2006). Physical Chemistry, 8th ed. Oxford University Press. p. 79. ISBN 0-19-870072-5.

- ↑ Engel, Thomas; Philip Reid (2006). Physical Chemistry. Pearson Benjamin Cummings. p. 86. ISBN 0-8053-3842-X.

- 1 2 3 Licker, Mark D. (2004). McGraw-Hill concise encyclopedia of chemistry. New York: McGraw-Hill Professional. ISBN 9780071439534.

- 1 2 Sethna, James P. (2006). Statistical mechanics : entropy, order parameters, and complexity. ([Online-Ausg.]. ed.). Oxford: Oxford University Press. p. 78. ISBN 978-0198566779.

- ↑ Clark, John O.E. (2004). The essential dictionary of science. New York: Barnes & Noble. ISBN 978-0760746165.

- 1 2 3 Frigg, R. and Werndl, C. "Entropy – A Guide for the Perplexed". In Probabilities in Physics; Beisbart C. and Hartmann, S. Eds; Oxford University Press, Oxford, 2010

- ↑ Schroeder, Daniel V. (2000). An introduction to thermal physics ([Nachdr.] ed.). San Francisco, CA [u.a.]: Addison Wesley. p. 57. ISBN 9780201380279.

- ↑ "EntropyOrderParametersComplexity.pdf www.physics.cornell.edu" (PDF). Retrieved 2012-08-17.

- ↑ "Jaynes, E. T., "The Gibbs Paradox," In Maximum Entropy and Bayesian Methods; Smith, C. R; Erickson, G. J; Neudorfer, P. O., Eds; Kluwer Academic: Dordrecht, 1992, pp. 1–22" (PDF). Retrieved 2012-08-17.

- 1 2 Sandler, Stanley I. (2006). Chemical, biochemical, and engineering thermodynamics (4th ed.). New York: John Wiley & Sons. p. 91. ISBN 978-0471661740.

- ↑ McQuarrie, Donald A.; Simon, John D. (1997). Physical chemistry : a molecular approach (Rev. ed.). Sausalito, Calif.: Univ. Science Books. p. 817. ISBN 9780935702996.

- ↑ Haynie, Donald, T. (2001). Biological Thermodynamics. Cambridge University Press. ISBN 0-521-79165-0.

- ↑ Daintith, John (2005). A dictionary of science (5th ed.). Oxford: Oxford University Press. ISBN 9780192806413.

- ↑ de Rosnay, Joel (1979). The Macroscope – a New World View (written by an M.I.T.-trained biochemist). Harper & Row, Publishers. ISBN 0-06-011029-5.

- ↑ J. A. McGovern,"Heat Capacities". Archived from the original on 2012-08-19. Retrieved 2013-01-27.

- ↑ Ben-Naim, Arieh (21 September 2007). "On the So-Called Gibbs Paradox, and on the Real Paradox" (PDF). Entropy. 9 (3): 132–136. doi:10.3390/e9030133.

- ↑ Daintith, John (2005). Oxford Dictionary of Physics. Oxford University Press. ISBN 0-19-280628-9.

- ↑ ""Entropy production theorems and some consequences," Physical Review E; Saha, Arnab; Lahiri, Sourabh; Jayannavar, A. M; The American Physical Society: 14 July 2009, pp. 1–10". Link.aps.org. Retrieved 2012-08-17.

- 1 2 Moore, J. W.; C. L. Stanistski; P. C. Jurs (2005). Chemistry, The Molecular Science. Brooks Cole. ISBN 0-534-42201-2.

- ↑ Jungermann, A.H. (2006). "Entropy and the Shelf Model: A Quantum Physical Approach to a Physical Property". Journal of Chemical Education. 83 (11): 1686–1694. Bibcode:2006JChEd..83.1686J. doi:10.1021/ed083p1686.

- ↑ Levine, I. N. (2002). Physical Chemistry, 5th ed. McGraw-Hill. ISBN 0-07-231808-2.

- ↑ Late Nobel Laureate Max Born (8 August 2015). Natural Philosophy of Cause and Chance. BiblioLife. pp. 44, 146–147. ISBN 978-1-298-49740-6.

- ↑ Haase, R. (1971). Thermodynamics. New York: Academic Press. pp. 1–97. ISBN 0122456017.

- ↑ Sandler, Stanley, I. (1989). Chemical and Engineering Thermodynamics. John Wiley & Sons. ISBN 0-471-83050-X.

- ↑ "GRC.nasa.gov". GRC.nasa.gov. 2000-03-27. Retrieved 2012-08-17.

- ↑ Franzen, Stefan. "Third Law." (PDF). ncsu.ed.

- ↑ "GRC.nasa.gov". GRC.nasa.gov. 2008-07-11. Retrieved 2012-08-17.

- ↑ Gribbin, John (1999). Gribbin, Mary, ed. Q is for quantum : an encyclopedia of particle physics. New York: Free Press. ISBN 0-684-85578-X.

- ↑ "Entropy: Definition and Equation". Encyclopedia Britannica. Retrieved 22 May 2016.

- 1 2 Brooks, Daniel R.; Wiley, E. O. (1988). Evolution as entropy : toward a unified theory of biology (2nd ed.). Chicago [etc.]: University of Chicago Press. ISBN 0-226-07574-5.

- 1 2 Landsberg, P.T. (1984). "Is Equilibrium always an Entropy Maximum?". J. Stat. Physics. 35: 159–169. Bibcode:1984JSP....35..159L. doi:10.1007/bf01017372.

- 1 2 Landsberg, P.T. (1984). "Can Entropy and "Order" Increase Together?". Physics Letters. 102A: 171–173. Bibcode:1984PhLA..102..171L. doi:10.1016/0375-9601(84)90934-4.

- ↑ Lambert, Frank L. "A Student's Approach to the Second Law and Entropy". entropysite.oxy.edu. Archived from the original on 17 July 2009. Retrieved 22 May 2016.

- ↑ Watson, J.R.; Carson, E.M. (May 2002). "Undergraduate students' understandings of entropy and Gibbs free energy." (PDF). University Chemistry Education. 6 (1): 4. ISSN 1369-5614.

- ↑ Lambert, Frank L. (February 2002). "Disorder - A Cracked Crutch for Supporting Entropy Discussions". Journal of Chemical Education. 79 (2): 187. doi:10.1021/ed079p187.

- ↑ Atkins, Peter (1984). The Second Law. Scientific American Library. ISBN 0-7167-5004-X.

- ↑ Sandra Saary (Head of Science, Latifa Girls’ School, Dubai) (23 February 1993). "Book Review of "A Science Miscellany"". Khaleej Times. Galadari Press, UAE: XI.

- ↑ Lathia, R; Agrawal, T; Parmar, V; Dobariya, K; Patel, A (2015-10-20). "Heat Death (The Ultimate Fate of the Universe)". doi:10.13140/rg.2.1.4158.2485.

- ↑ Lieb, Elliott H.; Yngvason, Jakob (March 1999). "The physics and mathematics of the second law of thermodynamics". Physics Reports. 310 (1): 1–96. doi:10.1016/S0370-1573(98)00082-9.

- ↑ Carathéodory, C. (September 1909). "Untersuchungen über die Grundlagen der Thermodynamik". Mathematische Annalen (in German). 67 (3): 355–386. doi:10.1007/BF01450409.

- ↑ R. Giles (22 January 2016). Mathematical Foundations of Thermodynamics: International Series of Monographs on Pure and Applied Mathematics. Elsevier Science. ISBN 978-1-4831-8491-3.

- ↑ M. Tribus, E.C. McIrvine, Energy and information, Scientific American, 224 (September 1971), pp. 178–184

- ↑ Balian, Roger (2004). "Entropy, a Protean concept". In Dalibard, Jean. Poincaré Seminar 2003: Bose-Einstein condensation - entropy. Basel: Birkhäuser. pp. 119–144. ISBN 9783764371166.

- ↑ Brillouin, Leon (1956). Science and Information Theory. ISBN 0-486-43918-6.

- ↑ Georgescu-Roegen, Nicholas (1971). The Entropy Law and the Economic Process. Harvard University Press. ISBN 0-674-25781-2.

- ↑ Chen, Jing (2005). The Physical Foundation of Economics – an Analytical Thermodynamic Theory. World Scientific. ISBN 981-256-323-7.

- ↑ Kalinin, M.I.; Kononogov, S.A. (2005). "Boltzmann's constant". Measurement Techniques. 48: 632–636. doi:10.1007/s11018-005-0195-9.

- ↑ Ben-Naim, Arieh (2008). Entropy demystified the second law reduced to plain common sense (Expanded ed.). Singapore: World Scientific. ISBN 9789812832269.

- ↑ "Edwin T. Jaynes – Bibliography". Bayes.wustl.edu. 1998-03-02. Retrieved 2009-12-06.

- ↑ Schneider, Tom, DELILA system (Deoxyribonucleic acid Library Language), (Information Theory Analysis of binding sites), Laboratory of Mathematical Biology, National Cancer Institute, FCRDC Bldg. 469. Rm 144, P.O. Box. B Frederick, MD 21702-1201, USA

- ↑ Brooks, Daniel, R.; Wiley, E.O. (1988). Evolution as Entropy– Towards a Unified Theory of Biology. University of Chicago Press. ISBN 0-226-07574-5.

- ↑ Avery, John (2003). Information Theory and Evolution. World Scientific. ISBN 981-238-399-9.

- ↑ Yockey, Hubert, P. (2005). Information Theory, Evolution, and the Origin of Life. Cambridge University Press. ISBN 0-521-80293-8.

- ↑ Chiavazzo, Eliodoro; Fasano, Matteo; Asinari, Pietro. "Inference of analytical thermodynamic models for biological networks". Physica A: Statistical Mechanics and its Applications. 392: 1122–1132. Bibcode:2013PhyA..392.1122C. doi:10.1016/j.physa.2012.11.030.

- ↑ Chen, Jing (2015). The Unity of Science and Economics: A New Foundation of Economic Theory. http://www.springer.com/us/book/9781493934645: Springer.

- ↑ Chiavazzo, Eliodoro; Isaia, Marco; Mammola, Stefano; Lepore, Emiliano; Ventola, Luigi; Asinari, Pietro; Pugno, Nicola Maria. "Cave spiders choose optimal environmental factors with respect to the generated entropy when laying their cocoon". Scientific Reports. 5: 7611. Bibcode:2015NatSR...5E7611C. doi:10.1038/srep07611.

- ↑ IUPAC, Compendium of Chemical Terminology, 2nd ed. (the "Gold Book") (1997). Online corrected version: (2006–) "Entropy unit".

- ↑ von Baeyer, Hans Christian (2003). Information: The New Language of Science. Harvard University Press. ISBN 0-674-01387-5.

- ↑ Srednicki M (August 1993). "Entropy and area". Phys. Rev. Lett. 71 (5): 666–669. arXiv:hep-th/9303048. Bibcode:1993PhRvL..71..666S. doi:10.1103/PhysRevLett.71.666. PMID 10055336.

- ↑ Callaway DJE (April 1996). "Surface tension, hydrophobicity, and black holes: The entropic connection". Phys. Rev. E. 53 (4): 3738–3744. arXiv:cond-mat/9601111. Bibcode:1996PhRvE..53.3738C. doi:10.1103/PhysRevE.53.3738. PMID 9964684.

- ↑ Buchan, Lizzy. "Black holes do not exist, says Stephen Hawking". Cambridge News. Retrieved 27 January 2014.

- ↑ Layzer, David (1988). Growth of Order in the Universe. MIT Press.

- ↑ Chaisson, Eric J. (2001). Cosmic Evolution: The Rise of Complexity in Nature. Harvard University Press. ISBN 0-674-00342-X.

- ↑ Lineweaver, Charles H.; Davies, Paul C. W.; Ruse, Michael, eds. (2013). Complexity and the Arrow of Time. Cambridge University Press. ISBN 978-1-107-02725-1.

- ↑ Stenger, Victor J. (2007). God: The Failed Hypothesis. Prometheus Books. ISBN 1-59102-481-1.

- ↑ Gal-Or, Benjamin (1981, 1983, 1987). Cosmology, Physics and Philosophy. Springer Verlag. ISBN 0-387-96526-2.

- ↑ Georgescu-Roegen, Nicholas (1971). The Entropy Law and the Economic Process. (Full book accessible in three parts at SlideShare). Cambridge, Massachusetts: Harvard University Press. ISBN 0674257804.

- ↑ Cleveland, Cutler J.; Ruth, Matthias (1997). "When, where, and by how much do biophysical limits constrain the economic process? A survey of Nicholas Georgescu-Roegen's contribution to ecological economics" (PDF). Ecological Economics. Amsterdam: Elsevier. 22 (3): 203–223. doi:10.1016/s0921-8009(97)00079-7. Archived from the original (PDF) on 2015-12-08.

- ↑ Daly, Herman E.; Farley, Joshua (2011). Ecological Economics. Principles and Applications. (PDF contains full book) (2nd ed.). Washington: Island Press. ISBN 9781597266819.

- ↑ Schmitz, John E.J. (2007). The Second Law of Life: Energy, Technology, and the Future of Earth As We Know It. (Link to the author's science blog, based on his textbook). Norwich: William Andrew Publishing. ISBN 0815515375.

- ↑ Ayres, Robert U. (2007). "On the practical limits to substitution" (PDF). Ecological Economics. Amsterdam: Elsevier. 61: 115–128. doi:10.1016/j.ecolecon.2006.02.011.

- ↑ Kerschner, Christian (2010). "Economic de-growth vs. steady-state economy" (PDF). Journal of Cleaner Production. Amsterdam: Elsevier. 18: 544–551. doi:10.1016/j.jclepro.2009.10.019.

Further reading

- Atkins, Peter; De Paula, Julio (2006). Physical Chemistry (8th ed.). Oxford University Press. ISBN 0-19-870072-5.

- Baierlein, Ralph (2003). Thermal Physics. Cambridge University Press. ISBN 0-521-65838-1.

- Ben-Naim, Arieh (2007). Entropy Demystified. World Scientific. ISBN 981-270-055-2.

- Callen, Herbert B. (2001). Thermodynamics and an Introduction to Thermostatistics (2nd ed.). John Wiley and Sons. ISBN 0-471-86256-8.

- Chang, Raymond (1998). Chemistry (6th ed.). New York: McGraw Hill. ISBN 0-07-115221-0.

- Cutnell, John D.; Johnson, Kenneth J. (1998). Physics (4th ed.). John Wiley and Sons, Inc. ISBN 0-471-19113-2.

- Dugdale, J. S. (1996). Entropy and its Physical Meaning (2nd ed.). Taylor and Francis (UK); CRC (US). ISBN 0-7484-0569-0.

- Fermi, Enrico (1937). Thermodynamics. Prentice Hall. ISBN 0-486-60361-X.

- Goldstein, Martin; Goldstein, Inge F. (1993). The Refrigerator and the Universe. Harvard University Press. ISBN 0-674-75325-9.

- Gyftopoulos, E.P.; Beretta, G.P. (1991, 2005, 2010). Thermodynamics. Foundations and Applications. Dover. ISBN 0-486-43932-1.

- Haddad, Wassim M.; Chellaboina, VijaySekhar; Nersesov, Sergey G. (2005). Thermodynamics – A Dynamical Systems Approach. Princeton University Press. ISBN 0-691-12327-6.

- Kroemer, Herbert; Kittel, Charles (1980). Thermal Physics (2nd ed.). W. H. Freeman Company. ISBN 0-7167-1088-9.

- Lambert, Frank L.; entropysite.oxy.edu

- Müller-Kirsten, Harald J.W. (2013). Basics of Statistical Physics (2nd ed.). Singapore: World Scientific. ISBN 978-981-4449-53-3.

- Penrose, Roger (2005). The Road to Reality: A Complete Guide to the Laws of the Universe. New York: A. A. Knopf. ISBN 0-679-45443-8.

- Reif, F. (1965). Fundamentals of statistical and thermal physics. McGraw-Hill. ISBN 0-07-051800-9.

- Schroeder, Daniel V. (2000). Introduction to Thermal Physics. New York: Addison Wesley Longman. ISBN 0-201-38027-7.

- Serway, Raymond A. (1992). Physics for Scientists and Engineers. Saunders Golden Sunburst Series. ISBN 0-03-096026-6.

- Spirax-Sarco Limited, Entropy – A Basic Understanding. A primer on entropy tables for steam engineering.

- von Baeyer, Hans Christian (1998). Maxwell's Demon: Why Warmth Disperses and Time Passes. Random House. ISBN 0-679-43342-2.

- Entropy for beginners – a wikibook

- An Intuitive Guide to the Concept of Entropy Arising in Various Sectors of Science – a wikibook

External links

- Entropy and the Second Law of Thermodynamics - an A-level physics lecture with detailed derivation of entropy based on Carnot cycle

- Khan Academy: entropy lectures, part of Chemistry playlist

- The Second Law of Thermodynamics and Entropy - Yale OYC lecture, part of Fundamentals of Physics I (PHYS 200)

- Entropy and the Clausius inequality - MIT OCW lecture, part of 5.60 Thermodynamics & Kinetics, Spring 2008

- The Discovery of Entropy by Adam Shulman. Hour-long video, January 2013.

- Moriarty, Philip; Merrifield, Michael (2009). "S Entropy". Sixty Symbols. Brady Haran for the University of Nottingham.

- Entropy - Scholarpedia