Defragmentation

In the maintenance of file systems, defragmentation is a process that reduces the amount of fragmentation. It does this by physically organizing the contents of the mass storage device used to store files into the smallest number of contiguous regions (fragments). It also attempts to create larger regions of free space using compaction to impede the return of fragmentation. Some defragmentation utilities try to keep smaller files within a single directory together, as they are often accessed in sequence.

Defragmentation is advantageous and relevant to file systems on electromechanical disk drives. Moving the hard drive's read/write heads across different areas of the disk to access a fragmented file is slower than reading the entire contents of a non-fragmented file sequentially, which requires no seeks to other fragments.

Causes of fragmentation

Fragmentation occurs when the file system cannot or will not allocate enough contiguous space to store a complete file as a unit, but instead puts parts of it in gaps between existing files. Usually those gaps exist because they formerly held a file that has since been deleted, or because the file system allocated excess space for a file in the first place. Files that are often appended to (as with log files), the frequent adding and deleting of files (as with emails and web browser cache), larger files (as with videos) and greater numbers of files all contribute to fragmentation and consequent performance loss. Defragmentation attempts to alleviate these problems.

Example

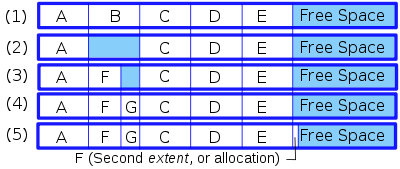

Consider the following scenario, as shown in the image above:

An otherwise blank disk has five files, A through E, each using 10 blocks of space (for this section, a block is an allocation unit of the filesystem; the block size is set when the disk is formatted and can be any size supported by the filesystem). On a blank disk, all of these files would be allocated one after the other (see example 1 in the image). If file B were to be deleted, there would be two options: mark the space for file B as empty to be used again later, or move all the files after B so that the empty space is at the end. Since moving the files could be time-consuming if many files need to be moved, usually the empty space is simply left there, marked in a table as available for new files (see example 2 in the image).[1] When a new file, F, is allocated requiring 6 blocks of space, it could be placed into the first 6 blocks of the space that formerly held file B, and the 4 blocks following it will remain available (see example 3 in the image). If another new file, G, is added and needs only 4 blocks, it could then occupy the space after F and before C (example 4 in the image). However, if file F needs to be expanded, there are three options, since the space immediately following it is no longer available:

- Move the file F to where it can be created as one contiguous file of the new, larger size. This would not be possible if the file is larger than the largest contiguous space available. The file could also be so large that the operation would take an undesirably long period of time.

- Move all the files after F until enough space opens up to make F contiguous again. This has the same problem as the previous option: if there are only a few files or not much data to move, the cost is small, but if there are thousands or tens of thousands of files, moving them all takes too long.

- Add a new block somewhere else, and indicate that F has a second extent (see example 5 in the image). Repeat this hundreds of times and the filesystem will have a number of small free segments scattered in many places, and some files will have multiple extents. When a file has many extents like this, access time for that file may become excessively long because of all the random seeking the disk will have to do when reading it.
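The scenario above, ending with the third option, can be reproduced with a toy allocator. This is only a minimal sketch: the `Disk` class, its first-fit policy, the 60-block disk size and the 3-block growth of F are assumptions made for illustration, not the behavior of any particular file system.

```python
from typing import List, Optional, Tuple

Extent = Tuple[int, int]  # (start block, length in blocks)


class Disk:
    """Toy allocator (hypothetical): first fit, splitting into extents if needed."""

    def __init__(self, size: int) -> None:
        self.blocks: List[Optional[str]] = [None] * size  # None marks a free block

    def runs(self, owner: Optional[str]) -> List[Extent]:
        """Contiguous runs of blocks held by `owner` (None means free space)."""
        found: List[Extent] = []
        for i, held_by in enumerate(self.blocks):
            if held_by != owner:
                continue
            if found and sum(found[-1]) == i:  # block i directly follows the last run
                found[-1] = (found[-1][0], found[-1][1] + 1)
            else:
                found.append((i, 1))
        return found

    def allocate(self, name: str, count: int) -> None:
        """Give `name` `count` more blocks."""
        free = self.runs(None)
        if sum(length for _, length in free) < count:
            raise OSError("not enough free blocks")
        # Prefer the first free run that holds the whole request ...
        fitting = [run for run in free if run[1] >= count]
        # ... otherwise spill across free runs, which creates extra extents.
        for start, length in (fitting[:1] or free):
            take = min(length, count)
            self.blocks[start:start + take] = [name] * take
            count -= take
            if count == 0:
                break

    def delete(self, name: str) -> None:
        """Mark the file's blocks as free without moving anything else."""
        self.blocks = [None if b == name else b for b in self.blocks]


disk = Disk(60)               # assumed disk size
for name in "ABCDE":
    disk.allocate(name, 10)   # example 1: five files laid out back to back
disk.delete("B")              # example 2: a 10-block gap appears
disk.allocate("F", 6)         # example 3: F takes the first 6 blocks of the gap
disk.allocate("G", 4)         # example 4: G fills the rest of the gap
disk.allocate("F", 3)         # example 5: F grows (3 blocks assumed) and gains an extent
print(disk.runs("F"))         # F now occupies two separate extents
```

Calling `disk.runs(None)` at any step lists the free segments, which shows how repeated deletions and extensions scatter free space across the disk.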

Additionally, the concept of “fragmentation” is not limited to individual files that have multiple extents on the disk. For instance, a group of files normally read in a particular sequence (like files accessed by a program when it is loading, which can include certain DLLs, various resource files, the audio/visual media files in a game) can be considered fragmented if they are not in sequential load order on the disk, even if the individual files are not fragmented; the read/write heads will have to seek these (non-fragmented) files randomly to access them in sequence. Some groups of files may have been originally installed in the correct sequence, but drift apart with time as certain files within the group are deleted. Updates are a common cause of this, because in order to update a file, most updaters delete the old file first, and then write a new, updated one in its place. However, most filesystems do not write the new file in the same physical place on the disk. This allows unrelated files to fill in the empty spaces left behind. In Windows, a good defragmenter will read the Prefetch files to identify as many of these file groups as possible and place the files within them in access sequence. Another frequently good assumption is that files in any given folder are related to each other and might be accessed together.

To defragment a disk, defragmentation software (also known as a "defragmenter") can only move files around within the free space available. This is an intensive operation and cannot be performed on a filesystem with little or no free space. During defragmentation, system performance will be degraded, and it is best to leave the computer alone during the process so that the defragmenter does not have to cope with unexpected changes to the filesystem. Depending on the algorithm used, it may or may not be advantageous to perform multiple passes. The reorganization involved in defragmentation does not change the logical location of the files (defined as their location within the directory structure).
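The dependence on free space can be illustrated with a simplified in-place compaction pass. This is a sketch under stated assumptions, not the algorithm of any real defragmenter: the disk is modeled as a list of `(file name, block index)` entries, files are laid out in order of first appearance, and the function name `defragment` is hypothetical.

```python
from typing import List, Optional, Tuple

# (file name, block index within the file), or None for a free block
Block = Optional[Tuple[str, int]]


def defragment(disk: List[Block]) -> int:
    """Compact `disk` in place and return the number of block moves performed."""
    # Assumed target layout: files in order of first appearance, each one
    # contiguous and in block order, with all free space gathered at the end.
    order: List[str] = []
    for block in disk:
        if block is not None and block[0] not in order:
            order.append(block[0])
    used = [b for b in disk if b is not None]
    target: List[Block] = sorted(used, key=lambda b: (order.index(b[0]), b[1]))
    target += [None] * (len(disk) - len(target))

    moves = 0
    for pos, wanted in enumerate(target):
        if disk[pos] == wanted:
            continue  # already in its final place
        if disk[pos] is not None:
            # The slot is occupied by another block: evict it into free space.
            try:
                scratch = disk.index(None, pos + 1)
            except ValueError:
                raise RuntimeError("no free block to move data into") from None
            disk[scratch] = disk[pos]
            moves += 1
        source = disk.index(wanted, pos + 1)
        disk[pos], disk[source] = wanted, None
        moves += 1
    return moves


layout: List[Block] = [("A", 0), ("F", 0), ("G", 0), ("C", 0), ("F", 1), None, None]
print(defragment(layout), layout)
```

With no free block at all, the first eviction fails, which mirrors the statement that a full filesystem cannot be defragmented. A real tool would also move data in larger units and protect against power loss mid-move, which this sketch ignores.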

Common countermeasures

Partitioning

A common strategy to optimize defragmentation and to reduce the impact of fragmentation is to partition the hard disk(s) in a way that separates the parts of the file system that experience many more reads than writes from the more volatile zones where files are created and deleted frequently. The directories that contain the users' profiles are modified constantly (especially with the Temp directory and web browser cache creating thousands of files that are deleted in a few days). If files from user profiles are held on a dedicated partition (as is commonly recommended for UNIX file systems, where frequently changing data is typically stored in the /var partition), the defragmenter runs better since it does not need to deal with all the static files from other directories. For partitions with relatively little write activity, defragmentation time greatly improves after the first defragmentation, since the defragmenter will need to defragment only a small number of new files in the future.

Offline defragmentation

The presence of immovable system files, especially a swap file, can impede defragmentation. These files can be safely moved when the operating system is not in use. For example, ntfsresize moves these files to resize an NTFS partition. The tool PageDefrag could defragment Windows system files such as the swap file and the files that store the Windows registry by running at boot time before the GUI is loaded. PageDefrag is not fully supported on Windows Vista and later, and has not been updated.

In NTFS, as files are added to the disk, the Master File Table (MFT) must grow to store the information for the new files. Every time the MFT cannot be extended because some file is in the way, the MFT gains a fragment. In early versions of Windows, the MFT could not be safely defragmented while the partition was mounted, and so Microsoft wrote a hard block into the defragmenting API. However, since Windows XP, an increasing number of defragmenters are able to defragment the MFT, because the Windows defragmentation API has been improved and now supports that move operation.[2] Even with the improvements, the first four clusters of the MFT remain unmovable by the Windows defragmentation API, so some defragmenters store the MFT in two fragments: the first four clusters wherever they were placed when the disk was formatted, and the rest of the MFT at the beginning of the disk (or wherever the defragmenter's strategy deems to be the best place).

User and performance issues

In a wide range of modern multi-user operating systems, an ordinary user cannot defragment the system disks since superuser (or "Administrator") access is required to move system files. Additionally, file systems such as NTFS are designed to decrease the likelihood of fragmentation.[3][4] Improvements in modern hard drives such as RAM cache, faster platter rotation speed, command queuing (SCSI/ATA TCQ or SATA NCQ), and greater data density reduce the negative impact of fragmentation on system performance to some degree, though increases in commonly used data quantities offset those benefits. Modern systems also profit enormously from the huge disk capacities currently available, since partially filled disks fragment much less than full disks,[5] and on a high-capacity HDD, the same partition occupies a smaller range of cylinders, resulting in faster seeks. However, the average access time can never be lower than a half rotation of the platters, and platter rotation (measured in rpm) is the speed characteristic of HDDs which has experienced the slowest growth over the decades (compared to data transfer rate and seek time), so minimizing the number of seeks remains beneficial in most storage-heavy applications. Defragmentation does just that: it ensures that there is at most one seek per file, counting only the seeks to non-adjacent tracks.
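The half-rotation bound and the cost of extra seeks can be made concrete with a little arithmetic. The sketch below is a rough model only: it assumes every fragment costs one average seek plus half a rotation, and the drive figures in the usage example (9 ms seek, 150 MB/s transfer, 7200 rpm) are illustrative assumptions rather than measurements.

```python
def average_rotational_latency_ms(rpm: float) -> float:
    """Time for half a platter revolution, in milliseconds."""
    ms_per_revolution = 60_000.0 / rpm
    return ms_per_revolution / 2


def read_time_ms(fragments: int, seek_ms: float, rpm: float,
                 size_mb: float, transfer_mb_s: float) -> float:
    """Rough time to read a file stored in `fragments` separate extents."""
    # Each fragment is assumed to cost one average seek plus half a rotation.
    positioning = fragments * (seek_ms + average_rotational_latency_ms(rpm))
    transfer = size_mb / transfer_mb_s * 1000.0
    return positioning + transfer


# A 7200 rpm drive cannot average better than about 4.17 ms per access.
print(round(average_rotational_latency_ms(7200), 2))

# Same 100 MB file, contiguous versus split into 200 fragments (assumed figures).
print(read_time_ms(1, 9.0, 7200, 100, 150))
print(read_time_ms(200, 9.0, 7200, 100, 150))
```

In this model the transfer term is identical for both layouts, so the whole difference comes from positioning time, which is the part defragmentation reduces.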

Flash memory vs conventional hard disks

When reading data from a conventional electromechanical hard disk drive, the disk controller must first position the head, relatively slowly, to the track where a given fragment resides, and then wait while the disk platter rotates until the fragment reaches the head. A solid-state drive (SSD) is based on flash memory with no moving parts, so random access of a file fragment on flash memory does not suffer this delay, making defragmentation to optimize access speed unnecessary. Furthermore, since flash memory can be written to only a limited number of times before it fails, defragmentation is actually detrimental (except in the mitigation of catastrophic failure). However, Windows still defragments an SSD automatically (albeit less vigorously) to prevent the file system from reaching its maximum fragmentation tolerance. Once the maximum fragmentation limit is reached, subsequent attempts to write to the disk fail.[6]

Windows System Restore points may be deleted during defragmenting/optimizing

Running most defragmenters and optimizers can cause the Microsoft Shadow Copy service to delete some of the oldest restore points, even if the defragmenters/optimizers are built on the Windows API. This is because Shadow Copy keeps track of some movements of big files performed by the defragmenters/optimizers; when the total disk space used by shadow copies would exceed a specified threshold, the oldest restore points are deleted until usage falls back within the limit.[7]
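The threshold behavior described above amounts to evicting the oldest entries first. The following is a deliberately simplified model, not the Shadow Copy implementation: the function `enforce_limit`, the restore point names and the sizes are all hypothetical, and the growth caused by a defragmentation run is represented simply as a larger size on the newest restore point.

```python
from collections import deque
from typing import Deque, List, Tuple

RestorePoint = Tuple[str, int]  # (label, space used in MB); hypothetical model


def enforce_limit(points: Deque[RestorePoint], limit_mb: int) -> List[str]:
    """Drop the oldest restore points until total usage is within `limit_mb`.

    `points` is ordered oldest first and is modified in place.
    Returns the labels of the restore points that were deleted.
    """
    deleted: List[str] = []
    total = sum(size for _, size in points)
    while points and total > limit_mb:
        label, size = points.popleft()  # oldest restore point goes first
        total -= size
        deleted.append(label)
    return deleted


# Assumed figures: tracking large file moves inflates the newest restore point,
# pushing total usage over a 1000 MB limit.
history: Deque[RestorePoint] = deque([("mon", 300), ("tue", 300), ("wed", 700)])
print(enforce_limit(history, 1000))  # the oldest point is dropped
```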

Defragmenting and optimizing

Besides defragmenting program files, a defragmenting tool can also reduce the time it takes to load programs and open files. For example, the Windows 9x defragmenter included the Intel Application Launch Accelerator, which optimized programs on the disk by placing the defragmented program files and their dependencies next to each other, in the order in which the program loads them, so that these programs load faster.[8] The outer tracks, at the beginning of the hard drive, have a higher transfer rate than the inner tracks. Placing frequently accessed files onto the outer tracks increases performance.[9] Third-party defragmenters, such as MyDefrag, will move frequently accessed files onto the outer tracks and defragment these files.[10]

Approach and defragmenters by file-system type

- FAT: MS-DOS 6.x and Windows 9x systems come with a defragmentation utility called Defrag. The DOS version is a limited version of Norton SpeedDisk.[11] The version that came with Windows 9x was licensed from Symantec Corporation, and the version that came with Windows 2000 and XP was licensed from Condusiv Technologies.

- NTFS was introduced with Windows NT 3.1, but the NTFS filesystem driver did not include any defragmentation capabilities.[12] In Windows NT 4.0, defragmenting APIs were introduced that third-party tools could use to perform defragmentation tasks; however, no defragmentation software was included. In Windows 2000, Windows XP and Windows Server 2003, Microsoft included a defragmentation tool based on Diskeeper[13] that made use of the defragmentation APIs and was a snap-in for Computer Management. In Windows Vista, Windows 7 and Windows 8, the tool was greatly improved and given a new interface with no visual disk map, and it is no longer part of Computer Management.[14][15] There are also a number of free and commercial third-party defragmentation products available for Microsoft Windows.

- BSD UFS, particularly in FreeBSD, uses an internal reallocator that seeks to reduce fragmentation at the moment the information is written to disk.[16] This effectively controls system degradation after extended use.

- Btrfs has online and automatic defragmentation available.[17]

- Linux ext2, ext3, and ext4: Much like UFS, these filesystems employ allocation techniques designed to keep fragmentation under control at all times.[18] As a result, defragmentation is not needed in the vast majority of cases.[19] ext2 uses an offline defragmenter called e2defrag, which does not work with its successor ext3. However, other programs, including filesystem-independent ones such as defragfs,[20] may be used to defragment an ext3 filesystem. ext4 is somewhat backward compatible with ext3, and thus has generally the same amount of support from defragmentation programs. e4defrag can be used to defragment an ext4 filesystem, including online defragmentation.

- VxFS has the fsadm utility that includes defrag operations.

- JFS has the defragfs utility on IBM operating systems.[21]

- HFS Plus, introduced in 1998 with Mac OS 8.1, has a number of optimizations to the allocation algorithms in an attempt to defragment files while they are being accessed, without a separate defragmenter.[22] There are several restrictions for files to be candidates for 'on-the-fly' defragmentation (including a maximum size of 20 MB). A utility, iDefrag, by Coriolis Systems has been available since OS X 10.3.

- WAFL in NetApp's ONTAP 7.2 operating system has a command called reallocate that is designed to defragment large files.

- XFS provides an online defragmentation utility called xfs_fsr.

- SFS performs defragmentation in an almost completely stateless way (apart from the location it is working on), so defragmentation can be stopped and started instantly.[23]

- ADFS, the file system used by RISC OS and earlier Acorn Computers, keeps file fragmentation under control without requiring manual defragmentation.[24]

See also

- Comparison of defragmentation software

- Fragmentation (computing)

- File system fragmentation

- List of defragmentation software

- Virtual disk image

- Wear levelling, a similar technique for prolonging flash memory content

References

- [1] The practice of marking the now unused space of a deleted file in a table as available for later use (without erasing its contents) is why undelete programs are able to work; they recover files whose names have been deleted from the directory, but whose space has not yet been reused.

- [2] msdn.microsoft.com: "The other big enhancement [in Windows XP] is support for online defragmentation of the MFT and most directory and file metadata"

- [3] Serdar Yegulalp (18 September 2006). "Disk defragmentation: Performance-sapping bogeyman, or best practice?". SearchWindowsServer.com: Disk Defragmentation Fast Guide. Retrieved 2008-12-27.

One of the many improvements NTFS provided was a reduced propensity for fragmentation.

- [4] Darcy, Jeff (19 April 2002). "Filesystem Fragmentation". Canned Platypus.

UNIX filesystems tend to do a lot to prevent fragmentation and generally reduce head motion - preallocation, cylinder groups, blah blah blah - but it does still occur and most filesystems don’t actually do all that much to undo it once it exists.

- [5] Serdar Yegulalp (20 September 2005). "New hard disk drives reduce need for disk defragmentation". SearchWindowsServer.com: Disk Defragmentation Fast Guide. Retrieved 2008-12-27.

- [6] Hanselman, Scott (3 December 2014). "The real and complete story - Does Windows defragment your SSD?". Scott Hanselman's blog. Microsoft.

- [7] Jeroen Kessels (21 May 2010). "FAQ Using - Why do I have more diskspace after running MyDefrag?". Retrieved 2010-10-11.

- [8] Cwdixon.com. Cwdixon.com. Retrieved on 2013-07-28.

- [9] The Ultimate Defragger - LaRud's Place. Larud.net (2012-01-19). Retrieved on 2013-07-28.

- [10] http://www.mydefrag.com/index.html On most harddisks the beginning of the harddisk is considerably faster than the end, sometimes by as much as 200 percent! You can measure this yourself with utilities such as HD Tune. MyDefrag is therefore geared towards moving all files to the beginning of the disk.

- [11] Norton, Peter (October 1994). Peter Norton's Complete Guide to DOS 6.22. Sams. p. 521.

- [12] M. Kozierok, Charles (2001-04-17). "NTFS Versions". PC Guide. Retrieved 2015-02-20.

- [13] Third-party disk defragmenter tools for Windows. Support.microsoft.com (2011-08-23). Retrieved on 2013-07-28.

- [14] "Disk Defragmentation – Background and Engineering the Windows 7 Improvements". Retrieved 2014-06-15.

- [15] "New Defrag options in Windows 8". Retrieved 2014-06-15.

- [16] "FreeBSD Man Pages". The FreeBSD Project. Retrieved 21 February 2015.

- [17] "Linux kernel 3.0, Section 1.1. Btrfs: Automatic defragmentation, scrubbing, performance improvements". kernelnewbies.org. 2011-07-21. Retrieved 2016-04-05.

- [18] "HTG Explains: Why Linux Doesn't Need Defragmenting". How-To Geek. Retrieved 2013-08-01.

- [19] 5.10. Filesystems. Tldp.org (2002-11-09). Retrieved on 2013-06-22.

- [20] Erik Bärwaldt: Optimizing data organization on disk

- [21] "Journaling File System Support". eComStation. Retrieved 2008-12-27.

- [22] "Fragmentation in HFS Plus Volumes".

As we have seen, an HFS+ volume seems to resist fragmentation rather well on Mac OS X 10.3.x, and I don't envision fragmentation to be a problem bad enough to require proactive remedies (such as a defragmenting tool).

- [23] "Detecting a file fragmentation point for reconstructing fragmented files using sequential hypothesis testing". US8407192 B2. Retrieved 21 February 2015.

- [24] Reeves, Nick (26 October 1990). "E format design document". Retrieved 24 May 2013.

Sources

- Norton, Peter (1994) Peter Norton's Complete Guide to DOS 6.22, page 521 – Sams (ISBN 067230614X)

- Woody Leonhard, Justin Leonhard (2005) Windows XP Timesaving Techniques For Dummies, Second Edition page 456 – For Dummies (ISBN 0-764578-839).

- Jensen, Craig (1994). Fragmentation: The Condition, the Cause, the Cure. Executive Software International (ISBN 0-9640049-0-9).

- Dave Kleiman, Laura Hunter, Mahesh Satyanarayana, Kimon Andreou, Nancy G Altholz, Lawrence Abrams, Darren Windham, Tony Bradley and Brian Barber (2006) Winternals: Defragmentation, Recovery, and Administration Field Guide – Syngress (ISBN 1-597490-792)

- Robb, Drew (2003) Server Disk Management in a Windows Environment Chapter 7 – AUERBACH (ISBN 0849324327)

External links

- The Big Windows 7 Defragmenter Test Benchmarks of popular defrag utilities

- Microsoft Windows XP defragmentation - How to schedule a weekly defragmentation

- Microsoft Windows 2000 Professional and Server defragmentation - How to schedule defragmentation

- SST Hard Disk Optimizer

- How Linux avoids making files fragmented

- How defragmentation was changed for Windows 7

- Complete list of Defragmentation Utilities for Windows

- Does Your SSD's File System Affect Performance?