Arithmetic logic unit

An arithmetic logic unit (ALU) is a combinational digital electronic circuit that performs arithmetic and bitwise operations on integer binary numbers. This is in contrast to a floating-point unit (FPU), which operates on floating point numbers. An ALU is a fundamental building block of many types of computing circuits, including the central processing unit (CPU) of computers, FPUs, and graphics processing units (GPUs). A single CPU, FPU or GPU may contain multiple ALUs.

The inputs to an ALU are the data to be operated on, called operands, and a code indicating the operation to be performed and, optionally, status information from a previous operation; the ALU's output is the result of the performed operation. In many designs, the ALU also exchanges additional information with a status register, which relates to the result of the current or previous operations.

Signals

An ALU has a variety of input and output nets, which are the electrical conductors used to convey digital signals between the ALU and external circuitry. When an ALU is operating, external circuits apply signals to the ALU inputs and, in response, the ALU produces and conveys signals to external circuitry via its outputs.

Data

A basic ALU has three parallel data buses consisting of two input operands (A and B) and a result output (Y). Each data bus is a group of signals that conveys one binary integer number. Typically, the A, B and Y bus widths (the number of signals comprising each bus) are identical and match the native word size of the external circuitry (e.g., the encapsulating CPU or other processor).

Opcode

The opcode input is a parallel bus that conveys to the ALU an operation selection code, which is an enumerated value that specifies the desired arithmetic or logic operation to be performed by the ALU. The opcode size (its bus width) is related to the number of different operations the ALU can perform; for example, a four-bit opcode can specify up to sixteen different ALU operations. Generally, an ALU opcode is not the same as a machine language opcode, though in some cases it may be directly encoded as a bit field within a machine language opcode.

Status

The status outputs are various individual signals that convey supplemental information about the result of an ALU operation. These outputs are usually stored in registers so they can be used in future ALU operations or for controlling conditional branching. The collection of bit registers that store the status outputs are often treated as a single, multi-bit register, which is referred to as the "status register" or "condition code register". General-purpose ALUs commonly have status signals such as:

- Carry-out, which conveys the carry resulting from an addition operation, the borrow resulting from a subtraction operation, or the overflow bit resulting from a binary shift operation.

- Zero, which indicates all bits of output are logic zero.

- Negative, which indicates the result of an arithmetic operation is negative.

- Overflow, which indicates the result of an arithmetic operation has exceeded the numeric range of output.

- Parity, which indicates whether an even or odd number of bits in the output are logic one.

The status input allows additional information to be made available to the ALU when performing an operation. Typically, this is a "carry-in" bit that is the stored carry-out from a previous ALU operation.

Circuit operation

An ALU is a combinational logic circuit, meaning that its outputs will change asynchronously in response to input changes. In normal operation, stable signals are applied to all of the ALU inputs and, when enough time (known as the "propagation delay") has passed for the signals to propagate through the ALU circuitry, the result of the ALU operation appears at the ALU outputs. The external circuitry connected to the ALU is responsible for ensuring the stability of ALU input signals throughout the operation, and for allowing sufficient time for the signals to propagate through the ALU before sampling the ALU result.

In general, external circuitry controls an ALU by applying signals to its inputs. Typically, the external circuitry employs sequential logic to control the ALU operation, which is paced by a clock signal of a sufficiently low frequency to ensure enough time for the ALU outputs to settle under worst-case conditions.

For example, a CPU begins an ALU addition operation by routing operands from their sources (which are usually registers) to the ALU's operand inputs, while the control unit simultaneously applies a value to the ALU's opcode input, configuring it to perform addition. At the same time, the CPU also routes the ALU result output to a destination register that will receive the sum. The ALU's input signals, which are held stable until the next clock, are allowed to propagate through the ALU and to the destination register while the CPU waits for the next clock. When the next clock arrives, the destination register stores the ALU result and, since the ALU operation has completed, the ALU inputs may be set up for the next ALU operation.

Functions

A number of basic arithmetic and bitwise logic functions are commonly supported by ALUs. Basic, general purpose ALUs typically include these operations in their repertoires:

Arithmetic operations

- Add: A and B are summed and the sum appears at Y and carry-out.

- Add with carry: A, B and carry-in are summed and the sum appears at Y and carry-out.

- Subtract: B is subtracted from A (or vice versa) and the difference appears at Y and carry-out. For this function, carry-out is effectively a "borrow" indicator. This operation may also be used to compare the magnitudes of A and B; in such cases the Y output may be ignored by the processor, which is only interested in the status bits (particularly zero and negative) that result from the operation.

- Subtract with borrow: B is subtracted from A (or vice versa) with borrow (carry-in) and the difference appears at Y and carry-out (borrow out).

- Two's complement (negate): A (or B) is subtracted from zero and the difference appears at Y.

- Increment: A (or B) is increased by one and the resulting value appears at Y.

- Decrement: A (or B) is decreased by one and the resulting value appears at Y.

- Pass through: all bits of A (or B) appear unmodified at Y. This operation is typically used to determine the parity of the operand or whether it is zero or negative.

Bitwise logical operations

- AND: the bitwise AND of A and B appears at Y.

- OR: the bitwise OR of A and B appears at Y.

- Exclusive-OR: the bitwise XOR of A and B appears at Y.

- One's complement: all bits of A (or B) are inverted and appear at Y.

Bit shift operations

| Type | Left shift | Right shift |

|---|---|---|

| Arithmetic |  |

|

| Logical |  |

|

| Rotate |  |

|

| Rotate through carry |  |

|

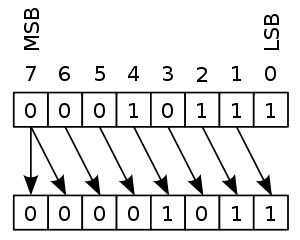

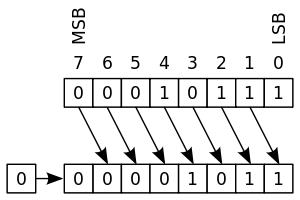

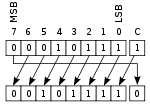

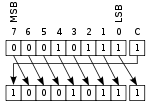

ALU shift operations cause operand A (or B) to shift left or right (depending on the opcode) and the shifted operand appears at Y. Simple ALUs typically can shift the operand by only one bit position, whereas more complex ALUs employ barrel shifters that allow them to shift the operand by an arbitrary number of bits in one operation. In all single-bit shift operations, the bit shifted out of the operand appears on carry-out; the value of the bit shifted into the operand depends on the type of shift.

- Arithmetic shift: the operand is treated as a two's complement integer, meaning that the most significant bit is a "sign" bit and is preserved.

- Logical shift: a logic zero is shifted into the operand. This is used to shift unsigned integers.

- Rotate: the operand is treated as a circular buffer of bits so its least and most significant bits are effectively adjacent.

- Rotate through carry: the carry bit and operand are collectively treated as a circular buffer of bits.

Complex operations

Although an ALU can be designed to perform complex functions, the resulting higher circuit complexity, cost, power consumption and larger size makes this impractical in many cases. Consequently, ALUs are often limited to simple functions that can be executed at very high speeds (i.e., very short propagation delays), and the external processor circuitry is responsible for performing complex functions by orchestrating a sequence of simpler ALU operations.

For example, computing the square root of a number might be implemented in various ways, depending on ALU complexity:

- Calculation in a single clock: a very complex ALU that calculates a square root in one operation.

- Calculation pipeline: a group of simple ALUs that calculates a square root in stages, with intermediate results passing through ALUs arranged like a factory production line. This circuit can accept new operands before finishing the previous ones and produces results as fast as the very complex ALU, though the results are delayed by the sum of the propagation delays of the ALU stages.

- Iterative calculation: a simple ALU that calculates the square root through several steps under the direction of a control unit.

The implementations above transition from fastest and most expensive to slowest and least costly. The square root is calculated in all cases, but processors with simple ALUs will take longer to perform the calculation because multiple ALU operations must be performed.

History

Mathematician John von Neumann proposed the ALU concept in 1945 in a report on the foundations for a new computer called the EDVAC.[1]

The cost, size, and power consumption of electronic circuitry was relatively high throughout the infancy of the information age. Consequently, all serial computers and many early computers, such as the PDP-8, had a simple ALU that operated on one data bit at a time, although they often presented a wider word size to programmers. One of the earliest computers to have multiple discrete single-bit ALU circuits was the 1948 Whirlwind I, which employed sixteen of such "math units" to enable it to operate on 16-bit words.

In 1967, Fairchild introduced the first ALU implemented as an integrated circuit, the Fairchild 3800, consisting of an eight-bit ALU with accumulator.[2] Other integrated-circuit ALUs soon emerged, including four-bit ALUs such as the Am2901 and 74181. These devices were typically "bit slice" capable, meaning they had "carry look ahead" signals that facilitated the use of multiple interconnected ALU chips to create an ALU with a wider word size. These devices quickly became popular and were widely used in bit-slice minicomputers.

Microprocessors began to appear in the early 1970s. Even though transistors had become smaller, there was often insufficient die space for a full-word-width ALU and, as a result, some early microprocessors employed a narrow ALU that required multiple cycles per machine language instruction. Examples of this include the original Motorola 68000, which performed a 32-bit "add" instruction in two cycles with a 16-bit ALU, and the popular Zilog Z80, which performed eight-bit additions with a four-bit ALU.[3] Over time, transistor geometries shrank further, following Moore's law, and it became feasible to build wider ALUs on microprocessors.

Modern integrated circuit (IC) transistors are orders of magnitude smaller than those of the early microprocessors, making it possible to fit highly complex ALUs on ICs. Today, many modern ALUs have wide word widths, and architectural enhancements such as barrel shifters and binary multipliers that allow them to perform, in a single clock cycle, operations that would have required multiple operations on earlier ALUs.

See also

References

- ↑ Philip Levis (November 8, 2004). "Jonathan von Neumann and EDVAC" (PDF). cs.berkeley.edu. pp. 1, 3. Retrieved January 20, 2015.

- ↑ Lee Boysel (2007-10-12). "Making Your First Million (and other tips for aspiring entrepreneurs)". U. Mich. EECS Presentation / ECE Recordings.

- ↑ Ken Shirriff. "The Z-80 has a 4-bit ALU. Here's how it works." 2013.

- Hwang, Enoch (2006). Digital Logic and Microprocessor Design with VHDL. Thomson. ISBN 0-534-46593-5.

- Stallings, William (2006). Computer Organization & Architecture: Designing for Performance (7th ed.). Pearson Prentice Hall. ISBN 0-13-185644-8.

External links

| Wikimedia Commons has media related to Arithmetic logic units. |